Designing for Agentic AI: The Decision Node Audit and the Evolution of Trust in Autonomous Systems

The rapid ascent of agentic Artificial Intelligence—systems capable of making autonomous decisions to complete multi-step tasks—has introduced a fundamental crisis in user experience design. As these agents move beyond simple chat interfaces to perform complex workflows like insurance processing, legal reviews, and financial trading, the industry is grappling with a phenomenon known as the "transparency gap." Users are frequently left in a state of uncertainty as agents operate in a "black box," disappearing for extended periods before returning with results that may or may not be accurate. To address this, a new methodology known as the Decision Node Audit is emerging as a critical standard for building trust between humans and autonomous systems.

The Transparency Crisis in Autonomous Systems

Current design paradigms for agentic AI often oscillate between two unproductive extremes. The first is the "Black Box" approach, where the system provides no visibility into its internal logic, leaving users to wonder if the AI is hallucinating or skipping critical steps. The second is the "Data Dump," where systems stream every technical log entry and API call, overwhelming the user with noise and leading to "notification blindness."

Industry data suggests that this lack of calibrated transparency is a primary barrier to AI adoption. According to recent surveys on digital trust, nearly 75% of enterprise users express concern over the lack of explainability in AI-driven decisions. When an agentic system fails without providing context, users lose the ability to troubleshoot or intervene, ultimately resulting in the abandonment of the tool.

The challenge for modern designers is to identify the "Goldilocks zone" of transparency—providing enough information to build confidence without sacrificing the efficiency that autonomous agents are intended to provide. This requires a shift from viewing UI as a cosmetic layer to treating it as a functional map of the AI’s internal decision-making process.

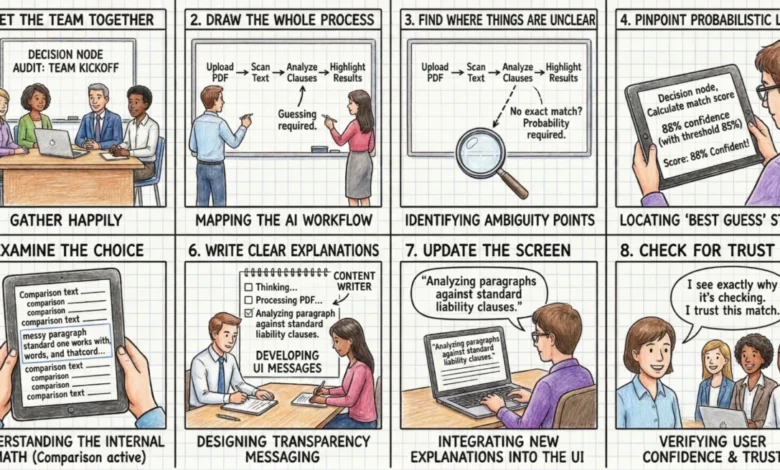

The Decision Node Audit: A New Framework for Logic Mapping

At the heart of the solution is the Decision Node Audit, a collaborative process that brings together designers, engineers, and product managers to map backend logic to the user interface. Unlike traditional software, where logic is largely deterministic (if A, then B), AI systems operate on probabilities. An agent might be 65% certain that a specific document meets compliance standards, or 90% sure that a vehicle image shows a total loss.

The Decision Node Audit involves three primary phases:

- Process Documentation: The team documents every step the AI takes, from the initial trigger to the final output.

- Ambiguity Identification: The team identifies "Ambiguity Points"—moments where the AI must choose between multiple valid paths based on a confidence threshold.

- Transparency Transformation: These hidden technical decisions are converted into "Transparency Moments" in the UI, providing the user with a human-readable update on the AI’s current focus.

For example, a procurement agent reviewing vendor contracts might previously have displayed a generic "Processing" message. Through a Decision Node Audit, the team might identify a specific point where the AI compares liability terms. The UI can then be updated to state: "Liability clause varies from standard template. Analyzing risk level." This specific update informs the user exactly why the system is taking time and where they may need to focus their attention later.
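The idea of turning an ambiguity point into a transparency moment can be sketched in a few lines of Python. This is a minimal illustration, not a description of any real system: the `DecisionNode` class, its field names, the node names, and the 0.8 threshold are all hypothetical, and it assumes that a step counts as an ambiguity point whenever the agent's confidence falls below the threshold.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DecisionNode:
    """One step in the agent's process, as documented during the audit."""
    name: str
    confidence: float   # agent's confidence in its chosen path, 0.0 to 1.0
    user_message: str   # human-readable text written for the UI


def transparency_moment(node: DecisionNode, threshold: float = 0.8) -> Optional[str]:
    """Return the UI message if the node is an ambiguity point, else None.

    Assumption: a node is an ambiguity point when its confidence is
    below the threshold. The threshold value itself is illustrative.
    """
    if not 0.0 <= node.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if node.confidence < threshold:
        return node.user_message
    return None


nodes = [
    DecisionNode("parse_contract", 0.97, "Reading contract."),
    DecisionNode(
        "compare_liability_terms",
        0.65,
        "Liability clause varies from standard template. Analyzing risk level.",
    ),
]

# Only the low-confidence step is surfaced to the user.
moments = [m for n in nodes if (m := transparency_moment(n)) is not None]
print(moments)
```

In this sketch the confident parsing step stays silent, while the uncertain liability comparison produces the status line from the procurement example.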

Case Study: Meridian Insurance and the Restoration of Confidence

The real-world implications of this methodology are illustrated by the case of Meridian, a mid-sized insurance firm that implemented agentic AI to process accident claims. Initially, the system allowed users to upload photos and police reports, after which it would display a "Calculating Claim Status" message for sixty seconds.

User testing revealed significant anxiety during this minute of silence. Claimants were unsure if the AI had actually "read" the police reports or if it was merely scanning the images. To resolve this, the Meridian design team conducted a Decision Node Audit and identified three high-probability decision steps: assessing vehicle damage, reviewing the police report for mitigating circumstances, and verifying coverage against the policy database.

By transforming these steps into a sequential transparency display—"Assessing vehicle damage," "Reviewing police report for mitigating circumstances," and "Verifying coverage limits"—the team saw a marked increase in user trust. Although the processing time remained the same, the explicit communication of the agent’s internal workings changed the user’s perception from a period of worry to a period of value creation.
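A sequential display of this kind could be driven by a small generator that announces each step before running it. The sketch below is an assumption about how such a display might be wired, not Meridian's implementation: `run_with_status` and the placeholder work functions are hypothetical, and only the three labels come from the case study.

```python
from typing import Callable, Iterator, List, Tuple

Step = Tuple[str, Callable[[], None]]


def run_with_status(steps: List[Step]) -> Iterator[str]:
    """Yield each step's label, then run that step's work.

    The work runs when the caller asks for the next label, so the
    current label is already on screen while its step executes.
    """
    for label, work in steps:
        yield label
        work()


completed: List[str] = []

# Placeholder callables standing in for real claim-processing calls.
steps: List[Step] = [
    ("Assessing vehicle damage", lambda: completed.append("damage")),
    ("Reviewing police report for mitigating circumstances",
     lambda: completed.append("report")),
    ("Verifying coverage limits", lambda: completed.append("coverage")),
]

shown: List[str] = []
for label in run_with_status(steps):
    shown.append(label)  # a real UI would render the label here
    print(label)
```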

The Impact/Risk Matrix: Prioritizing Visibility

A critical component of the Decision Node Audit is the Impact/Risk Matrix, which helps teams decide which of the dozens of internal events should be visible to the user. Not every API call warrants a notification; doing so would lead to the aforementioned data dump.

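The matrix categorizes decisions along two axes: Impact (the significance of the outcome) and Reversibility (how easily the action can be undone). Reversibility stands in for risk here: the harder an action is to undo, the riskier it is to let the agent take it unprompted.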

- Low Impact / Reversible: These are routine actions, such as renaming a file or sorting a list. These are typically handled with passive logs or "toasts" that do not interrupt the workflow.

- High Impact / Reversible: These are actions such as moving a sales lead to a different pipeline. They require an "Action Audit" and a clear "Undo" button, allowing the AI to move quickly while keeping the user in control.

- High Impact / Irreversible: These are critical actions such as deleting a database or executing a high-value financial trade. They require an "Intent Preview," where the AI pauses and asks for explicit permission before proceeding.

By applying this matrix, teams can prune the 50+ backend events generated during a process down to the three or four most meaningful moments for the user. This ensures that when the UI does speak, the user listens.
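The matrix lends itself to a simple classification function. The following is a rough sketch under stated assumptions: the `AgentEvent` and `Treatment` names are hypothetical, and because the three categories above do not cover low-impact irreversible actions, the sketch conservatively treats every irreversible action as needing an Intent Preview.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Treatment(Enum):
    PASSIVE_LOG = "passive log or toast"
    ACTION_AUDIT = "action audit with undo"
    INTENT_PREVIEW = "intent preview (ask before acting)"


@dataclass
class AgentEvent:
    name: str
    high_impact: bool
    reversible: bool


def classify(event: AgentEvent) -> Treatment:
    """Map an event to a UI treatment using the two matrix axes."""
    if not event.reversible:
        # Assumption: the low-impact / irreversible quadrant is not
        # described in the text, so it is handled conservatively here.
        return Treatment.INTENT_PREVIEW
    if event.high_impact:
        return Treatment.ACTION_AUDIT
    return Treatment.PASSIVE_LOG


def visible_moments(events: List[AgentEvent]) -> List[AgentEvent]:
    """Keep only events that deserve more than a passive log entry."""
    return [e for e in events if classify(e) is not Treatment.PASSIVE_LOG]


events = [
    AgentEvent("rename_file", high_impact=False, reversible=True),
    AgentEvent("move_sales_lead", high_impact=True, reversible=True),
    AgentEvent("execute_trade", high_impact=True, reversible=False),
]

for e in visible_moments(events):
    print(e.name, "->", classify(e).value)
```

Running the full list of backend events through a filter like `visible_moments` is one way to arrive at the handful of moments worth interrupting the user for.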

Validation through the "Wait, Why?" Protocol

To ensure that the transparency moments align with the user’s mental model, designers use qualitative validation techniques such as the "Wait, Why?" Protocol. During a session, a user watches the agent complete a task and is asked to think aloud. Any time the user asks "Wait, why did it do that?" or "Is it stuck?", a timestamp is recorded.

These questions serve as indicators of a missing transparency moment. In a study for a healthcare scheduling assistant, researchers found that a four-second static screen caused users to wonder if the system was checking their personal calendar or the doctor’s schedule. By splitting that wait into two distinct, labeled steps—"Checking your availability" and "Syncing with provider schedule"—the designers successfully reduced user anxiety and increased the perceived reliability of the tool.
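Once timestamps are recorded, they can be matched against whatever the screen was showing at that moment. The sketch below assumes a hypothetical session format (a sorted list of step start times with on-screen labels, plus a list of question times in seconds); the labels and numbers are invented for illustration and are not data from the study.

```python
from bisect import bisect_right
from collections import Counter
from typing import List, Tuple


def confusion_by_step(
    step_starts: List[Tuple[float, str]],
    question_times: List[float],
) -> Counter:
    """Count "Wait, why?" questions per on-screen step.

    step_starts must be sorted by start time (seconds from session
    start). A question belongs to the latest step that had already
    started when it was asked.
    """
    starts = [t for t, _ in step_starts]
    counts: Counter = Counter()
    for q in question_times:
        idx = bisect_right(starts, q) - 1
        if idx >= 0:  # ignore questions asked before the first step
            counts[step_starts[idx][1]] += 1
    return counts


# Illustrative session: one static "Loading" screen lasting four seconds.
step_starts = [(0.0, "Request received"), (2.0, "Loading"), (6.0, "Appointment options")]
question_times = [3.1, 4.5, 5.0]

counts = confusion_by_step(step_starts, question_times)
print(counts.most_common(1))
```

A step that collects most of the questions is a candidate for being split into smaller, labeled steps.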

Operationalizing Transparency: The Integrated Team Approach

Successfully implementing a Decision Node Audit requires a fundamental shift in the design process. Design can no longer be a siloed activity where designers hand off static mockups to engineers. Instead, it requires a "transparency matrix": a shared document where engineers map technical status codes to human-readable strings developed by content designers.

The role of the content designer is particularly vital in this new landscape. While an engineer might suggest a technically accurate status like "Executing function 402," a content designer translates this into "Comparing local vendor prices to secure your delivery." This translation ensures that the technical process is grounded in the user’s actual goals.
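In code, a transparency matrix can be as simple as a lookup table that both disciplines maintain. This is a minimal sketch: the `FUNC_402` key, the other status codes, and the fallback string are hypothetical, and only the vendor-price wording comes from the example above.

```python
from typing import Dict, Iterable, List

# Engineers own the keys; content designers own the strings.
TRANSPARENCY_MATRIX: Dict[str, str] = {
    "FUNC_402": "Comparing local vendor prices to secure your delivery",
}

# Hypothetical generic message for codes nobody has written copy for yet.
FALLBACK = "Working on your request"


def status_text(code: str) -> str:
    """Translate a technical status code into user-facing copy."""
    return TRANSPARENCY_MATRIX.get(code, FALLBACK)


def unmapped(codes: Iterable[str]) -> List[str]:
    """List codes emitted by the backend that still lack copy."""
    return sorted(set(codes) - set(TRANSPARENCY_MATRIX))


print(status_text("FUNC_402"))
print(unmapped(["FUNC_402", "FUNC_517", "FUNC_101"]))
```

A helper like `unmapped` gives the team a concrete agenda for the next logic review: every code on the list is a state the UI cannot yet explain.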

Furthermore, this integrated approach requires regular logic reviews. Designers must advocate for the system to expose more granular states, while engineers must ensure that these states are accurately reflected in real time. This cross-functional collaboration is the cornerstone of designing truly trustworthy AI.

Broader Implications for the AI Industry

As agentic AI continues to permeate sectors like healthcare, law, and finance, the demand for transparency will likely move from a "best practice" to a regulatory requirement. The European Union’s AI Act and other emerging frameworks already emphasize human oversight of high-risk systems and transparency about how those systems operate.

The Decision Node Audit provides a proactive framework for companies to meet these requirements while improving the user experience. By treating trust as a mechanical result of predictable communication rather than an abstract emotional byproduct, organizations can build agents that users are willing to empower with significant autonomy.

In the long term, the success of agentic AI will not be determined solely by the sophistication of its underlying models, but by the clarity of the interfaces that connect those models to human users. The shift from "Black Box" to "Open Experience" represents the next frontier in digital product design, where the primary goal is not just to automate tasks, but to foster a partnership between human intelligence and machine autonomy.