The Site Search Paradox and the Crisis of Digital Findability in Modern User Experience

The digital landscape has reached a critical inflection point where the sheer volume of available information has outpaced the mechanisms designed to navigate it. In the contemporary user experience (UX) ecosystem, success is no longer measured by the depth of a content library, but rather by the findability of that content. Despite the availability of sophisticated data analytics and advanced indexing tools, internal site search remains a primary point of failure for most enterprise and e-commerce platforms. This phenomenon, termed the Site-Search Paradox, describes a scenario where users, frustrated by the inadequacy of a local site’s search bar, abandon the platform to use global search engines like Google to find a specific page on that very same local site.

The implications of this failure are profound. When a user runs a "site:domain.com" query on a global engine, the host platform loses control over the user journey, surrenders valuable behavioral data to third parties, and risks losing the customer to a competitor’s advertisement or a more optimized search result. As digital transformation continues to accelerate, the gap between what users expect from a search bar and what internal systems provide has become a multimillion-dollar liability for global businesses.

A Historical Chronology of Search Expectations

To understand the current crisis, one must examine the evolution of web navigation over the last three decades. In the mid-1990s, the search bar was a luxury reserved for massive directories. Most websites relied on a "browse-first" philosophy, treating the search function as a literal index—much like the back of a physical textbook. These early systems were built on exact-string matching; if a user did not type the precise terminology used by the author, the system returned a "0 Results Found" error.

By the early 2000s, the rise of Google had fundamentally changed how people navigate the web. Users began to move away from hierarchical navigation (clicking through menus) toward a "search-first" behavior. However, while global search engines invested billions into natural language processing and semantic understanding, internal site search technologies largely stagnated. Many modern enterprise platforms still run on exact-match logic established twenty years ago, punishing users for typos, plurals, or the use of synonyms.

By 2015, the mobile revolution further exacerbated this tension. On smaller screens, complex navigation menus are often hidden behind "hamburger" icons, making the search bar the primary, and sometimes only, viable way to navigate a site. Today, industry research indicates that users have zero patience for learning a site’s specific taxonomy. They expect a "concierge" experience, yet they are frequently met with a "librarian" who refuses to help unless the user provides the exact ISBN.

The Economic Impact of the Syntax Tax

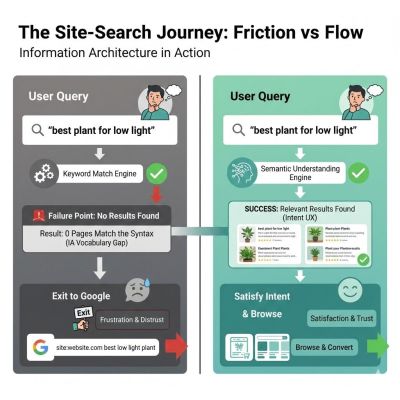

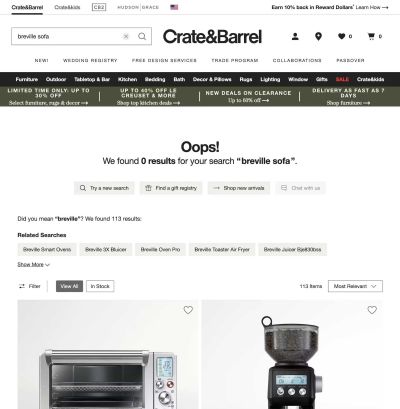

The primary driver of search abandonment is what UX researchers call the "Syntax Tax." This refers to the cognitive load imposed on a user when they are forced to guess the internal vocabulary of a corporation. Data from Origin Growth reveals that approximately 50% of users go directly to the search bar upon landing on a website. When these users encounter a failure—such as searching for a "sofa" on a site that only indexes "couches"—the result is rarely a second attempt with a different word. Instead, it is an immediate exit.

The financial consequences are quantifiable. According to Forrester Research, users who engage with a functional search bar are two to three times more likely to convert into paying customers compared to those who merely browse. Conversely, statistics from Nosto suggest that 80% of users will leave an e-commerce site permanently if the search results are poor. For a high-traffic retail platform, a 1% increase in search abandonment can translate into millions of dollars in lost annual revenue.
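To see how a small rise in search abandonment can scale into a large number, here is a back-of-the-envelope sketch. Every input (traffic, conversion rate, order value) is a hypothetical placeholder chosen for illustration, not a figure from the sources cited above; only the search-first share of roughly 50% echoes the statistic quoted earlier.

```python
def annual_revenue_lost(annual_visits, search_share, abandonment_increase,
                        searcher_conversion_rate, average_order_value):
    """Estimate yearly revenue lost when additional searchers abandon the site."""
    searchers = annual_visits * search_share
    extra_abandoners = searchers * abandonment_increase
    lost_orders = extra_abandoners * searcher_conversion_rate
    return lost_orders * average_order_value


# All values below are assumptions for illustration only.
loss = annual_revenue_lost(
    annual_visits=200_000_000,      # hypothetical high-traffic retailer
    search_share=0.5,               # about half of visitors go straight to search
    abandonment_increase=0.01,      # a 1-percentage-point rise in search abandonment
    searcher_conversion_rate=0.04,  # hypothetical conversion rate among searchers
    average_order_value=80.0,       # hypothetical order value in dollars
)
print(f"Estimated annual loss: ${loss:,.0f}")
```

Under these assumed inputs the estimate comes to about $3.2 million per year; the point is the shape of the calculation rather than the specific result.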

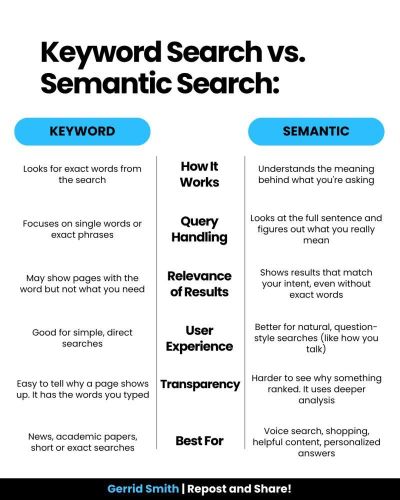

Technical Barriers: String Matching vs. Semantic Understanding

The technical divide between local search and global search lies in the difference between matching "strings" and matching "things." Most internal search engines are configured for keyword matching, which looks for literal sequences of characters. If a user searches for "running shoes," a keyword-based engine might miss a page titled "Men’s Footwear for Jogging."
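A minimal sketch of the "strings" side of that divide is shown here. The function name and the tiny catalog are hypothetical; the engine simply checks whether the query appears as a literal sequence of characters in a page title.

```python
def keyword_search(query, titles):
    """Return titles that contain the query as a literal, case-insensitive substring."""
    needle = query.lower()
    return [title for title in titles if needle in title.lower()]


# Hypothetical page titles for illustration.
titles = [
    "Men's Footwear for Jogging",
    "Trail Running Shoes",
    "Leather Dress Shoes",
]

print(keyword_search("running shoes", titles))     # ['Trail Running Shoes']
print(keyword_search("jogging sneakers", titles))  # []
```

The jogging footwear page is arguably relevant to both queries, yet it never appears because no shared character sequence exists.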

In contrast, modern semantic search draws on text-normalization techniques from information retrieval, such as stemming and lemmatization. Stemming strips suffixes so that "running" and "runs" reduce to the same root, while lemmatization uses vocabulary and part-of-speech context to map a word to its dictionary form, which is how an irregular form like "ran" is also resolved to "run." Furthermore, the Baymard Institute reports that 41% of e-commerce sites fail to support basic symbols or abbreviations (for example, treating the " symbol and "inch," or "lb" and "pound," as equivalent). Combined with a lack of "fuzzy matching" for typos, this creates a rigid environment where human error, a universal constant, results in a total breakdown of the user experience.
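The normalization steps described here can be sketched with the Python standard library alone. This is a rough illustration under stated assumptions: `naive_stem` is a crude suffix stripper (not the Porter algorithm or a real lemmatizer), `UNIT_ALIASES` is a hypothetical abbreviation map, and fuzzy matching is approximated with `difflib.get_close_matches`.

```python
import difflib
import re

# Hypothetical abbreviation map; a production list would be built from search logs.
UNIT_ALIASES = {"lb": "pound", "lbs": "pound"}


def naive_stem(word):
    """Strip a few common suffixes. Irregular forms such as 'ran' are left
    untouched, which is the gap a dictionary-based lemmatizer would fill."""
    if not word.isalpha() or word.endswith("ss"):
        return word
    for suffix in ("ing", "es", "s"):
        stem = word[: -len(suffix)]
        if word.endswith(suffix) and len(stem) >= 3:
            # Undo consonant doubling: "runn" -> "run".
            if suffix == "ing" and len(stem) > 3 and stem[-1] == stem[-2]:
                stem = stem[:-1]
            return stem
    return word


def normalize(query):
    """Lowercase, expand the inch symbol and unit abbreviations, then stem."""
    text = query.lower().replace('"', " inch ")
    tokens = re.findall(r"[a-z0-9]+", text)
    return [naive_stem(UNIT_ALIASES.get(token, token)) for token in tokens]


def correct_typo(word, vocabulary, cutoff=0.8):
    """Return the closest known term, or the word unchanged if nothing is close."""
    matches = difflib.get_close_matches(word, vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else word


print(normalize('15" laptops'))     # ['15', 'inch', 'laptop']
print(normalize("15 inch laptop"))  # ['15', 'inch', 'laptop']
print(correct_typo("laptpo", ["laptop", "tablet"]))
```

Because both spellings of the laptop query normalize to the same token list, an index built on normalized tokens would treat them as the same search.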

Case Studies in Information Architecture Failures

The "Curse of Knowledge" often blinds internal teams to the flaws in their own systems. A recent analysis of a major financial institution’s digital portal revealed a significant disconnect between user intent and site taxonomy. The institution’s support center was overwhelmed by calls from customers who claimed they could not find "loan payoff" information online.

Internal search logs showed that "loan payoff" was the most searched term on the site, yet it consistently yielded zero results. The reason was purely architectural: the bank’s internal team had labeled all relevant documentation under the formal legal term "Loan Release." To the bank, a "payoff" was a process, but a "release" was the official document. Because the search engine lacked a controlled vocabulary or a synonym library, it could not bridge the gap between the customer’s language and the corporate jargon.
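A synonym library of the kind missing here can be very small. The sketch below is illustrative only: the index contents, the document titles, and the synonym entries are hypothetical stand-ins, not the bank's actual data.

```python
# Hypothetical index keyed by the organization's official term.
INDEX = {
    "loan release": ["Loan Release Request Form", "Loan Release FAQ"],
}

# Hypothetical controlled vocabulary: customer phrasing -> official term.
SYNONYMS = {
    "loan payoff": "loan release",
    "pay off loan": "loan release",
}


def search(query, index, synonyms):
    """Translate the customer's wording to the official term before lookup."""
    term = " ".join(query.lower().split())
    canonical = synonyms.get(term, term)
    return index.get(canonical, [])


print(search("Loan Payoff", INDEX, {}))        # [] without a synonym library
print(search("Loan Payoff", INDEX, SYNONYMS))  # documents filed under "loan release"
```

The content never changes; only a translation layer between the customer's language and the corporate label is added.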

Similarly, a large-scale technical enterprise with over 5,000 documents found its internal search to be useless because the "Title" tags in the database were SKU numbers (e.g., "PROD-882-X") rather than human-readable names. Users searching for "installation guide" received no results because the phrase did not exist anywhere in the metadata. By implementing a human-centered taxonomy and mapping SKUs to common search queries, the company reduced its search-page exit rate by 40% within 90 days.
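One way to picture the fix is as an extra metadata field that carries human-readable aliases alongside each SKU. The record layout, the second SKU, and the alias phrases below are hypothetical; the case study does not describe the actual schema.

```python
# Hypothetical records: "title" is the stored SKU, "aliases" is the added human layer.
DOCUMENTS = [
    {"title": "PROD-882-X", "aliases": ["installation guide", "setup instructions"]},
    {"title": "PROD-417-B", "aliases": ["warranty terms"]},
]


def search_titles_only(query, documents):
    """Original behavior: match the query against the SKU title alone."""
    needle = query.lower()
    return [doc["title"] for doc in documents if needle in doc["title"].lower()]


def search_with_aliases(query, documents):
    """Revised behavior: also match against the human-readable aliases."""
    needle = query.lower()
    return [
        doc["title"]
        for doc in documents
        if needle in doc["title"].lower()
        or any(needle in alias.lower() for alias in doc["aliases"])
    ]


print(search_titles_only("installation guide", DOCUMENTS))   # []
print(search_with_aliases("installation guide", DOCUMENTS))  # ['PROD-882-X']
```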

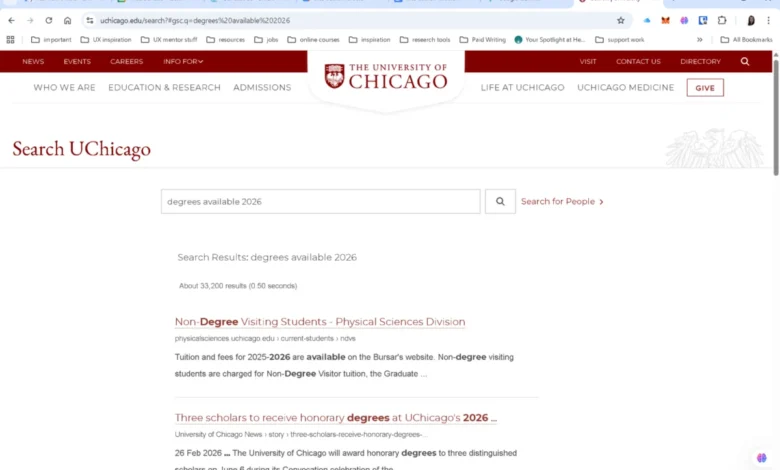

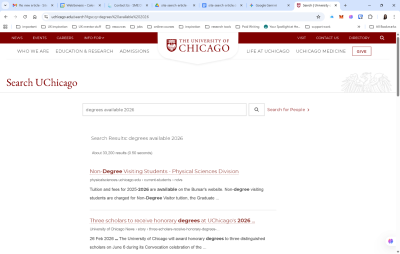

The Risks of Outsourcing Search to Google

In an attempt to bypass these complex IA challenges, many institutions, such as the University of Chicago and various government agencies, have implemented "Google-powered" search bars. While this provides an immediate boost in raw search power, it represents a strategic surrender.

Industry analysts warn that delegating the search experience to a third-party algorithm carries significant risks:

- Loss of Brand Control: Users are often exposed to third-party advertisements or "suggested results" that lead away from the site.

- Data Blindness: The organization loses the ability to analyze search logs, which are the most direct way to understand customer needs.

- Inability to Prioritize: A site owner cannot "boost" specific high-value pages or new product launches within a global algorithm.

- User Habituation: It trains the user to believe that the site’s own organization is incompetent, encouraging them to start their next journey on Google rather than the brand’s homepage.

A Framework for Reclaiming the Search Experience

To solve the Site-Search Paradox, organizations must transition from a "set it and forget it" technical implementation to a continuous "search-as-a-product" mindset. This involves a four-phase audit and optimization framework:

Phase 1: The Zero-Result Diagnostic

Organizations must analyze search logs specifically for queries that returned no results. These are typically categorized into three buckets: typos that require "fuzzy matching," synonyms that require a controlled vocabulary, and genuine content gaps that require the creation of new pages.
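The three buckets can be approximated with a short triage script. This is a sketch under assumptions: the indexed vocabulary, the synonym candidates, and the sample log are hypothetical, and typo detection relies on `difflib.get_close_matches` with an arbitrary similarity cutoff.

```python
import difflib
from collections import Counter

# Hypothetical terms the site already indexes, and known customer synonyms.
VOCABULARY = ["couch", "loan release", "water bottle"]
SYNONYMS = {"sofa": "couch", "loan payoff": "loan release"}


def triage(query, vocabulary, synonyms, cutoff=0.8):
    """Assign a zero-result query to one of the three diagnostic buckets."""
    term = " ".join(query.lower().split())
    if term in synonyms:
        return "synonym"
    if difflib.get_close_matches(term, vocabulary, n=1, cutoff=cutoff):
        return "typo"
    return "content gap"


# Hypothetical zero-result queries pulled from a search log.
zero_result_log = ["sofa", "watter bottle", "gift card", "Loan Payoff"]
report = Counter(triage(q, VOCABULARY, SYNONYMS) for q in zero_result_log)
print(report)
```

In practice the synonym bucket usually starts empty and is filled by a person reviewing the "content gap" pile, since a script cannot know that "sofa" means "couch" until someone tells it.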

Phase 2: Intent Mapping

Not all searches are equal. A navigational search (e.g., "login") should bypass the results page entirely and take the user to the destination. An informational search (e.g., "how to return a package") should prioritize help articles, while a transactional search (e.g., "blue water bottle") should prioritize product listings with filters for size and price.
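A rule-based router is one simple way to express this mapping. The URL paths, the prefix list, and the result-type labels below are hypothetical, and a real system would likely combine rules like these with log data or a trained classifier.

```python
# Hypothetical shortcuts for navigational queries.
NAVIGATIONAL = {"login": "/login", "contact": "/contact"}

# Hypothetical phrasing cues for informational queries.
INFORMATIONAL_PREFIXES = ("how to ", "how do ", "what is ")


def route(query):
    """Decide whether to redirect or which result type to prioritize."""
    term = " ".join(query.lower().split())
    if term in NAVIGATIONAL:
        return ("redirect", NAVIGATIONAL[term])
    if term.startswith(INFORMATIONAL_PREFIXES):
        return ("results", "help_articles")
    # Default: treat the query as transactional and lead with products.
    return ("results", "products")


print(route("login"))                    # ('redirect', '/login')
print(route("How to return a package"))  # ('results', 'help_articles')
print(route("blue water bottle"))        # ('results', 'products')
```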

Phase 3: Semantic Scaffolding

Rather than returning a flat list of links, a modern search interface should provide context. If a user searches for a specific laptop model, the results should include the product page, the latest firmware drivers, user manuals, and related accessories. This "associative" approach mimics human thought patterns and increases the "stickiness" of the site.
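As a sketch of the difference between a flat list and scaffolded results, the snippet below groups hits by content type in a fixed display order. The hit structure, the type labels, and the sample titles are hypothetical.

```python
from collections import defaultdict

# Hypothetical display order for result sections.
SECTION_ORDER = ["product", "driver", "manual", "accessory"]


def group_results(hits):
    """Group a flat list of hits into ordered sections by content type."""
    groups = defaultdict(list)
    for hit in hits:
        groups[hit["type"]].append(hit["title"])
    return {section: groups[section] for section in SECTION_ORDER if section in groups}


# Hypothetical flat results for a laptop model query.
hits = [
    {"type": "manual", "title": "Model X User Manual"},
    {"type": "product", "title": "Model X Laptop"},
    {"type": "accessory", "title": "Model X Charger"},
    {"type": "driver", "title": "Model X Firmware Update"},
]

for section, titles in group_results(hits).items():
    print(section, titles)
```

The product page leads, followed by drivers, manuals, and accessories, regardless of the order in which the engine returned the hits.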

Phase 4: Probabilistic Design

UX designers must move away from the binary "Results Found/No Results Found" model. Interfaces should be designed for "confidence levels," using "Did you mean?" prompts and "Partial Match" suggestions to keep the user in the flow even when an exact match is unavailable.
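One possible reading of "confidence levels" is a tiered response, sketched below. The tier names, the similarity cutoff, and the catalog are assumptions for illustration; similarity is measured with `difflib.SequenceMatcher` from the Python standard library.

```python
from difflib import SequenceMatcher


def respond(query, catalog, suggest_cutoff=0.9):
    """Pick a response tier instead of a binary found / not found."""
    q = " ".join(query.lower().split())
    # Tier 1: exact match, show results directly.
    exact = [item for item in catalog if item.lower() == q]
    if exact:
        return {"mode": "results", "items": exact}
    # Tier 2: near match, offer a "Did you mean?" prompt.
    best = max(catalog, key=lambda item: SequenceMatcher(None, q, item.lower()).ratio())
    if SequenceMatcher(None, q, best.lower()).ratio() >= suggest_cutoff:
        return {"mode": "did_you_mean", "items": [best]}
    # Tier 3: partial match, show items sharing at least one word with the query.
    words = set(q.split())
    partial = [item for item in catalog if words & set(item.lower().split())]
    if partial:
        return {"mode": "partial_match", "items": partial}
    # Tier 4: nothing close, fall back to browse suggestions rather than a dead end.
    return {"mode": "browse_suggestions", "items": []}


# Hypothetical catalog for illustration.
catalog = ["blue water bottle", "red water bottle", "steel travel mug"]
print(respond("blue watr bottle", catalog)["mode"])
print(respond("green water bottle", catalog)["mode"])
print(respond("garden hose", catalog)["mode"])
```

With this catalog, the misspelled query yields a "Did you mean?" prompt, the unavailable color yields partial matches, and the unrelated query falls through to browse suggestions.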

The Future of Site Search: The AI Integration

As we look toward the future, the integration of Large Language Models (LLMs) and Generative AI is set to redefine site search once again. Users are beginning to expect "answer engines" rather than "link engines." The next generation of internal search will not just point to a document; it will synthesize the information within that document to provide a direct answer.

However, the foundation of these AI tools remains high-quality Information Architecture. An AI is only as effective as the data it can access. Without robust metadata, structured content, and a clear understanding of user intent, even the most expensive AI implementation will fail to solve the fundamental problem of findability.

Conclusion: Search as a Strategic Conversation

The search box is the only place on a digital platform where the user speaks directly to the brand. It is a moment of explicit intent and high vulnerability. When a brand fails to understand a user’s query, it is not merely a technical error; it is a failure of empathy and a breach of the customer relationship.

By investing in human-centered Information Architecture and moving beyond literal string matching, organizations can reclaim their search boxes from global giants. The goal is to transform the search bar from a digital dead-end into a sophisticated concierge that understands not just what the user typed, but what they actually need. In the modern economy, findability is the ultimate competitive advantage.