Europe’s Scientific Powerhouse: Unveiling MareNostrum V, A Hybrid Classical-Quantum Supercomputing Marvel

Nestled amidst the historic campus of the Polytechnic University of Catalonia in Barcelona, where a stroll might lead one to the serene Torre Girona chapel, stands a testament to modern scientific ambition. This 19th-century chapel, with its soaring arches and stained glass, now houses a unique historical exhibit: the original 2004 racks of MareNostrum, Europe’s first major supercomputer. However, the true successor, MareNostrum V, a behemoth of computational power and one of the fifteen most potent supercomputers globally, operates in a dedicated, meticulously cooled facility adjacent to this architectural anachronism, symbolizing a profound convergence of heritage and cutting-edge innovation.

The journey to MareNostrum V is a story of continuous evolution, reflecting Europe’s strategic commitment to high-performance computing (HPC) and scientific sovereignty. The Barcelona Supercomputing Center (BSC), established in 2005, has been at the forefront of this endeavor, consistently pushing the boundaries of computational science. The MareNostrum series began with MareNostrum I, inaugurated in 2004, which quickly became a symbol of European scientific prowess. Each subsequent iteration—MareNostrum II, III, and IV—saw exponential increases in processing power, cooling efficiency, and architectural sophistication, driven by the escalating demands of climate modeling, biomedical research, engineering simulations, and fundamental physics. MareNostrum V, inaugurated in December 2023, represents the pinnacle of this lineage, a €200 million investment co-funded by the EuroHPC Joint Undertaking (EuroHPC JU), Spain, Portugal, and Turkey, designed to address the grand challenges facing humanity.

For data scientists accustomed to the flexible, on-demand resources of commercial cloud platforms like AWS EC2 instances or distributed frameworks such as Spark and Ray, the operational paradigm of a supercomputer like MareNostrum V presents an entirely different challenge. HPC at this scale is a meticulously engineered ecosystem governed by distinct architectural principles, specialized schedulers, and a level of operational rigor that is truly difficult to comprehend without direct engagement. Recently, researchers had the opportunity to leverage MareNostrum V’s immense capabilities to generate vast quantities of synthetic data for advanced machine learning surrogate models, offering a rare glimpse into the operational mechanics of this computational leviathan.

The Architecture: Engineering for Unprecedented Scale

The fundamental misconception when approaching HPC is to envision a single, extraordinarily powerful computer. In reality, a supercomputer functions as a massive, tightly integrated cluster of thousands of independent computing units, all interconnected by an incredibly fast, low-latency network. This distributed architecture is paramount, as the network itself becomes the computer. Any data scientist who has grappled with the bottlenecks of transferring massive data batches between distributed GPUs in cloud environments understands that network performance is not merely a feature but the very foundation of efficient parallel processing.

To mitigate potential data transfer bottlenecks, MareNostrum V employs an InfiniBand NDR200 fabric arranged in a fat-tree topology. In a conventional office network, bandwidth becomes congested as multiple devices contend for the same uplink through a central switch. A fat-tree instead provisions more aggregate link capacity at each higher level of the switch hierarchy: the branches get "thicker" closer to the "trunk," so traffic is not squeezed as it moves up the tree and the fabric can offer non-blocking bandwidth. As a result, any of MareNostrum V’s roughly 7,500 compute nodes (6,408 general-purpose plus 1,120 accelerated, as detailed below) can communicate with any other node with low, consistent latency, a critical feature for applications requiring extensive inter-node communication. Each InfiniBand NDR200 port runs at 200 gigabits per second (Gb/s), providing the speed and low latency needed to orchestrate complex parallel tasks across the entire machine.
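To put the per-port figure in perspective, here is a back-of-the-envelope conversion. It assumes an ideal link running at the full 200 Gb/s with no protocol overhead, and the 1 TB payload is a hypothetical example rather than a figure from the system documentation.

```latex
\[
200\ \text{Gb/s} \div 8\ \text{bits per byte} = 25\ \text{GB/s},
\qquad
t_{1\,\text{TB}} \approx \frac{1000\ \text{GB}}{25\ \text{GB/s}} = 40\ \text{s}
\]
```

Real transfers will be slower once protocol overhead, storage speed, and contention from other jobs are taken into account.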

MareNostrum V’s computational power is strategically divided into two primary partitions, each optimized for specific workloads:

- General Purpose Partition (GPP): This partition is engineered for highly parallel CPU-intensive tasks, forming the backbone for a wide array of scientific simulations. It comprises 6,408 nodes, each equipped with 112 Intel Sapphire Rapids cores. Intel’s 4th Gen Xeon Scalable processors (Sapphire Rapids) are designed for data center and HPC workloads, offering significant improvements in core count, memory bandwidth, and integrated accelerators for AI and scientific computing. The GPP delivers a combined peak performance of 45.9 Petaflops (PFlops), making it suitable for general-purpose scientific computing, data analysis, and large-scale simulations that benefit from massive CPU parallelism.

- Accelerated Partition (ACC): This specialized partition is tailored for computationally demanding applications such as AI training, deep learning, molecular dynamics, and complex material science simulations. It features 1,120 nodes, each housing four NVIDIA H100 SXM GPUs, for 4,480 GPUs in total. The H100 Tensor Core GPU, based on the Hopper architecture, was NVIDIA’s flagship data-center accelerator when the system was commissioned, and it targets large language models, scientific simulations, and data analytics. With a retail cost of approximately $25,000 per H100, the GPU investment alone for this partition exceeds $110 million at list price. The ACC partition has a much higher peak performance than the GPP, reaching up to 260 PFlops, which underscores how much of a modern supercomputer’s throughput comes from GPU acceleration.
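Dividing the quoted figures gives a rough sense of scale. This is plain arithmetic on the numbers above, not a set of official per-node or per-GPU specifications: the peak values are theoretical, and the $25,000 price is the approximate retail figure already mentioned.

```latex
\[
\begin{aligned}
\text{GPP, per node:} \quad & \frac{45.9\ \text{PFlops}}{6{,}408\ \text{nodes}} \approx 7.2\ \text{TFlops} \\
\text{ACC, GPU count:} \quad & 1{,}120 \times 4 = 4{,}480\ \text{GPUs} \\
\text{ACC, per GPU:} \quad & \frac{260\ \text{PFlops}}{4{,}480\ \text{GPUs}} \approx 58\ \text{TFlops} \\
\text{GPU cost:} \quad & 4{,}480 \times \$25{,}000 = \$112\ \text{million}
\end{aligned}
\]
```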

Beyond these core compute partitions, MareNostrum V incorporates Login Nodes, which serve as the secure entry points for users via SSH. These nodes are strictly for lightweight tasks such as file transfers, code compilation, and submitting job scripts to the workload manager. Crucially, they are not intended for computational tasks, as executing heavy processes on them would degrade performance for all users and risk system instability.

Integrating Quantum Computing: A Hybrid Future

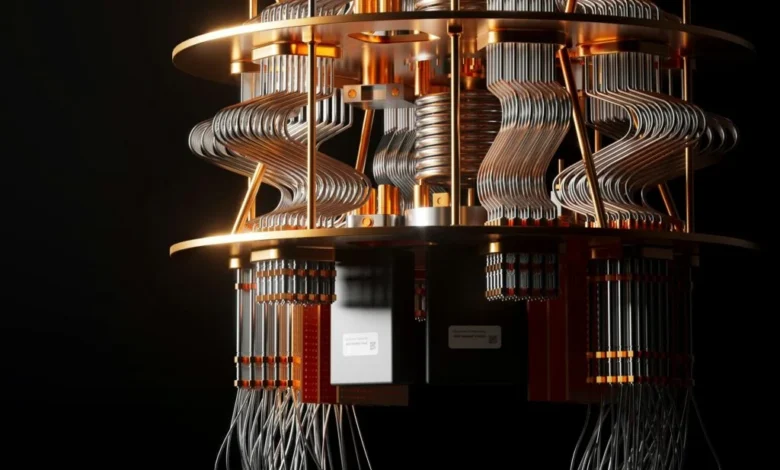

A significant recent development at MareNostrum V is the physical and logical integration of Spain’s first quantum computers. This groundbreaking initiative includes a digital gate-based quantum system and the newly acquired MareNostrum-Ona, a state-of-the-art quantum annealer utilizing superconducting qubits. This integration marks a pivotal step towards a hybrid classical-quantum computing paradigm. Rather than outright replacing the classical supercomputer, these Quantum Processing Units (QPUs) function as highly specialized accelerators. When the classical supercomputer encounters optimization problems of extreme complexity, or quantum chemistry simulations that would overwhelm even the powerful H100 GPUs, it can offload these specific, intractable calculations to the quantum hardware. This synergy promises to unlock solutions to problems currently beyond the reach of even the most powerful classical supercomputers, creating a massive hybrid classical-quantum computing powerhouse for Europe. The development and integration of MareNostrum-Ona align with Europe’s broader quantum strategy, aiming to establish a robust quantum ecosystem and foster innovation in fields like drug discovery, materials science, and cryptography.

Operational Realities: Navigating the HPC Ecosystem

Understanding the sophisticated hardware is only one aspect of leveraging a supercomputer; the operational protocols are equally critical and markedly different from commercial cloud environments. As a shared, publicly funded scientific resource, MareNostrum V operates under stringent security and resource allocation rules to ensure fair access and data integrity.

- The Airgap: One of the most significant adjustments for data scientists transitioning to HPC is the enforced network isolation. While external access to the supercomputer is possible via SSH for login, the compute nodes are entirely airgapped: they have no outbound internet connection. This means no `pip install` for missing libraries, no `wget` for external datasets, and no direct connection to external repositories like HuggingFace during job execution. All necessary software, libraries, and datasets must be pre-downloaded, compiled, and securely staged within the user’s allocated storage directory before a job is submitted. While initially daunting, this restriction is mitigated by the MareNostrum administrators, who provide a comprehensive suite of pre-installed libraries and software accessible via a module system.

- Data Movement: Due to the airgap, data ingress and egress are managed through `scp` or `rsync` via the login nodes. Raw datasets are securely pushed onto the system, processed by the compute nodes, and then the results (e.g., processed tensors, simulation outputs) are pulled back to the user’s local machine for post-processing and visualization. Paradoxically, the sheer speed of computation on MareNostrum V can often make the data transfer process (moving results back to a local environment) the primary bottleneck.

- Limits and Quotas: Resource allocation on a supercomputer is meticulously managed. Users cannot arbitrarily launch thousands of jobs and monopolize the system. Each research project is assigned a specific CPU-hour budget, and individual users face strict limits on the number of concurrent jobs they can run or queue. Furthermore, every submitted job requires a precisely defined wall-time limit. The scheduler operates ruthlessly: if a job requests two hours of compute time and attempts to run for two hours and one second, it will be unceremoniously terminated mid-calculation to free up resources for the next scheduled task. This strict enforcement necessitates meticulous code optimization and realistic time estimations.

- Logging in the Dark: Unlike interactive cloud sessions, supercomputer jobs are typically submitted to a scheduler and run in a batch process. There is no live terminal output to monitor during execution. Instead, all standard output (`stdout`) and standard error (`stderr`) are automatically redirected into dedicated log files (e.g., `sim_12345.out`, `sim_12345.err`). Upon job completion or in the event of a crash, researchers must meticulously review these text files to verify results or debug their code. Tools like `squeue` allow users to monitor job status, and `tail -f` on log files can provide near real-time updates for running jobs.
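The staging workflow described in these points can be sketched as a small script. This is an illustration under stated assumptions, not BSC's documented procedure: `LOGIN_HOST`, `REMOTE_DIR`, and the `wheels`, `data`, and `results` directories are hypothetical placeholders, and whether Python packages are installed on the login node or through the module system depends on site policy. By default the script only prints the commands it would run.

```shell
#!/bin/bash
# Sketch of an offline staging workflow. LOGIN_HOST and REMOTE_DIR are
# hypothetical placeholders, not real MareNostrum V addresses or paths.
LOGIN_HOST="${LOGIN_HOST:-user@login.example.org}"
REMOTE_DIR="${REMOTE_DIR:-projects/surrogate}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = execute them

# Print the command, and execute it only when DRY_RUN=0.
run() {
    echo "+ $*"
    if [ "$DRY_RUN" = "0" ]; then "$@"; fi
}

# Step 1 (local machine, with internet): fetch wheels for later offline use.
# The wheels must match the Python version and platform of the cluster.
stage_out() {
    run pip download -r requirements.txt -d wheels
    run rsync -avz wheels requirements.txt data "$LOGIN_HOST:$REMOTE_DIR/"
}

# Step 2 (on the cluster, before submitting): install from staged wheels only.
install_offline() {
    run pip install --no-index --find-links=wheels -r requirements.txt
}

# Step 3 (local machine, after the job): pull the results back.
fetch_results() {
    run rsync -avz "$LOGIN_HOST:$REMOTE_DIR/results/" results/
}

stage_out
install_offline
fetch_results
```

The `--no-index` flag tells `pip` not to contact any package index, so the install either succeeds from the staged files or fails immediately instead of hanging on a network timeout.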

Understanding SLURM Workload Manager: The Conductor of Compute

Upon successfully logging into MareNostrum V via SSH, users are greeted by a standard Linux terminal prompt. This seemingly unremarkable interface belies the immense power it connects to. There are no elaborate graphical interfaces or flashing lights; the complexity is managed beneath the surface. Directly executing heavy Python or C++ scripts on the login node is strictly forbidden, as it would quickly overload the shared resource and elicit a swift, albeit polite, intervention from system administrators.

Instead, HPC environments rely on sophisticated workload managers like SLURM (Simple Linux Utility for Resource Management). SLURM is an open-source job scheduler widely adopted across many computer clusters and supercomputers. Researchers craft bash scripts that precisely define their hardware requirements, specify software environments to be loaded, and outline the code to be executed. SLURM then places the job in a queue, intelligently identifies available hardware resources, executes the code, and releases the nodes upon completion.

Interacting with SLURM primarily involves embedding #SBATCH directives at the top of submission scripts. These directives act as a comprehensive shopping list for resources, allowing users to specify:

- `--job-name`: A descriptive name for the job.

- `--output` and `--error`: Paths for the `stdout` and `stderr` log files.

- `--qos`: Quality of Service, which dictates priority and resource limits (e.g., `gp_debug` for shorter, higher-priority runs).

- `--time`: The maximum wall-time duration for the job (e.g., `00:30:00` for 30 minutes).

- `--nodes`: The number of compute nodes required.

- `--ntasks`: The total number of tasks (processes) to be launched across the allocated nodes.

- `--cpus-per-task`: The number of CPU cores to allocate per task.

- `--mem`: The amount of memory required per node (the related `--mem-per-cpu` option sets it per CPU instead).

- `--partition`: Specifies which partition (GPP or ACC) to use.

- `--account`: Identifies the project account to which compute hours will be charged.

- `--dependency`: Allows chaining jobs together, ensuring sequential execution or dependencies on prior job outcomes.
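When many similar jobs differ only in a few resources, the directives listed above can be generated rather than typed by hand. The helper below is a hypothetical sketch: `to_walltime` and `sbatch_header` are illustrative names, not part of SLURM, and the job name and QoS in the example call are placeholders.

```shell
#!/bin/bash
# Convert a number of minutes into the HH:MM:SS form that --time accepts.
to_walltime() {
    local minutes=$1
    printf '%02d:%02d:00\n' $((minutes / 60)) $((minutes % 60))
}

# Print an #SBATCH header. Arguments: job name, QoS, minutes, nodes, tasks.
sbatch_header() {
    local name=$1 qos=$2 minutes=$3 nodes=$4 ntasks=$5
    cat <<EOF
#!/bin/bash
#SBATCH --job-name=$name
#SBATCH --output=logs/${name}_%j.out
#SBATCH --error=logs/${name}_%j.err
#SBATCH --qos=$qos
#SBATCH --time=$(to_walltime "$minutes")
#SBATCH --nodes=$nodes
#SBATCH --ntasks=$ntasks
EOF
}

# Example: a 30-minute, single-node, 6-task job in the gp_debug QoS.
sbatch_header cfd_sweep gp_debug 30 1 6
```

Redirecting the output to a file and appending the actual commands gives a complete submission script. The `%j` token is left untouched so that SLURM can substitute the job ID into the log file names.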

A Practical Example: Orchestrating an OpenFOAM Sweep

To illustrate the practical application of MareNostrum V, consider a common research scenario: building a machine learning surrogate model for aerodynamic downforce. This requires a substantial dataset derived from numerous high-fidelity computational fluid dynamics (CFD) simulations across various 3D meshes.

A typical SLURM job script for a single OpenFOAM CFD case on the General Purpose Partition might look like this:

    #!/bin/bash
    #SBATCH --job-name=cfd_sweep
    #SBATCH --output=logs/sim_%j.out
    #SBATCH --error=logs/sim_%j.err
    #SBATCH --qos=gp_debug
    #SBATCH --time=00:30:00
    #SBATCH --nodes=1
    #SBATCH --ntasks=6
    #SBATCH --account=nct_293

    # Start from a clean environment, then load the site-provided OpenFOAM build
    module purge
    module load OpenFOAM/11-foss-2023a
    source "$FOAM_BASH"

    # Serial pre-processing: one task each, so srun does not start six copies
    srun --ntasks=1 surfaceFeatureExtract
    srun --ntasks=1 blockMesh
    srun --ntasks=1 decomposePar -force

    # Parallel stages: srun launches all 6 MPI ranks and handles core mapping
    srun --mpi=pmix snappyHexMesh -parallel -overwrite
    srun --mpi=pmix potentialFoam -parallel
    srun --mpi=pmix simpleFoam -parallel

    # Serial post-processing: merge the per-rank results back into one case
    srun --ntasks=1 reconstructPar

This script specifies the job’s name, log file locations, quality of service, wall-time, resource allocation (1 node, 6 tasks), and project account. It then loads the necessary OpenFOAM module and uses `srun` to run the CFD pipeline: the serial utilities are launched as a single task each, because a plain `srun` would otherwise start one copy per allocated task, while the meshing and solver stages run in parallel across all six tasks.
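One practical detail about the log paths in this script: SLURM does not create the directory named in `--output` or `--error`, and a job whose log directory is missing will fail. A small pre-submission helper can guard against that. This is a sketch, and `ensure_log_dirs` is a hypothetical name rather than a SLURM command.

```shell
#!/bin/bash
# Create the directories named in a job script's --output/--error directives.
# Relative paths are created under the current directory, so run this from
# the directory the job will be submitted from.
ensure_log_dirs() {
    local script=$1 path
    # Keep only the value after "=" on the --output and --error lines.
    sed -nE 's/^#SBATCH[[:space:]]+--(output|error)=//p' "$script" |
    while IFS= read -r path; do
        mkdir -p "$(dirname "$path")"
    done
}

# Usage: ensure_log_dirs run_all.sh && sbatch run_all.sh
```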

To manage a sweep of 50 such simulations without manually submitting each one and potentially overwhelming the scheduler, researchers utilize SLURM’s dependency chaining. This orchestrator script efficiently queues a sequence of jobs:

    #!/bin/bash
    PREV_JOB_ID=""

    for CASE_DIR in cases/case_*; do
        cd "$CASE_DIR"
        if [ -z "$PREV_JOB_ID" ]; then
            # First case: nothing to wait for
            OUT=$(sbatch run_all.sh)
        else
            # Later cases: start only after the previous job has ended
            OUT=$(sbatch --dependency=afterany:$PREV_JOB_ID run_all.sh)
        fi
        # sbatch prints "Submitted batch job <id>"; the ID is the fourth field
        PREV_JOB_ID=$(echo "$OUT" | awk '{print $4}')
        cd ../..
    done

This script iterates through each simulation case directory, submitting one job per case and ensuring that each subsequent job starts only `afterany` of the previous one, that is, once the previous job has ended, whether it succeeded or failed. This creates an automated, robust data pipeline. A researcher can initiate this chain of 50 jobs in mere seconds, allowing the supercomputer to process the aerodynamic evaluations overnight, yielding processed, logged results ready for machine learning training by the next morning.
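A slightly more defensive variant of the chaining loop is sketched below. It is not the script used for the sweep; it swaps in two standard `sbatch` options: `--parsable`, which prints only the job ID instead of a human-readable message, and `--chdir`, which sets the job’s working directory without changing directories in the loop. `submit_chain` is a hypothetical helper name, and each case directory is assumed to contain a `run_all.sh`.

```shell
#!/bin/bash
# Submit one job per case directory, each waiting for the previous one to end.
submit_chain() {
    local prev_id="" case_dir job_id
    for case_dir in "$@"; do
        # Build the argument list; add the dependency from the second job on.
        local args=(--parsable --chdir="$case_dir")
        if [ -n "$prev_id" ]; then
            args+=(--dependency="afterany:$prev_id")
        fi
        # Stop the chain if a submission is rejected (e.g. a quota is hit).
        job_id=$(sbatch "${args[@]}" "$case_dir/run_all.sh") || return 1
        prev_id=${job_id%%;*}   # --parsable may print "id;cluster"
        echo "$case_dir -> job $prev_id"
    done
}

# Usage: submit_chain cases/case_*
```

Stopping at the first rejected submission avoids silently breaking the chain. Replacing `afterany` with `afterok` would make each job wait for a successful predecessor instead of any completed one.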

Parallelism Limits: The Inexorable Law of Amdahl

A common query from newcomers to HPC is why, with 112 cores per node, a CFD simulation might only request 6 tasks. The answer lies in Amdahl’s Law, a fundamental principle in parallel computing. This law states that the theoretical speedup achievable by executing a program across multiple processors is inherently limited by the fraction of the code that must be executed serially (i.e., cannot be parallelized). Mathematically, it is expressed as:

\[ S = \frac{1}{(1-p) + \frac{p}{N}} \]

Where \(S\) is the overall speedup, \(p\) is the proportion of the code that can be parallelized, \(1-p\) is the strictly serial fraction, and \(N\) is the number of processing cores.

The presence of the \((1-p)\) term in the denominator imposes a hard ceiling on speedup: as \(N\) grows, \(p/N\) shrinks toward zero and \(S\) approaches \(1/(1-p)\). Even if only 5% of a program is fundamentally sequential, the maximum theoretical speedup, regardless of how many cores are thrown at it, is \(1/0.05 = 20\times\). In practice the achievable speedup is lower still, because communication overhead becomes significant. Dividing a task across an excessive number of cores increases the time spent transferring data and synchronization messages across the InfiniBand network. If cores spend more time communicating boundary conditions than performing actual computations, adding more hardware can paradoxically slow the program down. This compute-to-communication ratio is a critical factor in optimizing code for supercomputers, and it is why a modest mesh may run best on a handful of tasks rather than a full node.
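To make the ceiling concrete, the formula can be evaluated for a few core counts. The sketch below assumes the 95% parallel fraction from the example above; `amdahl` is a hypothetical helper name, and `awk` is used only because bash has no floating-point arithmetic.

```shell
#!/bin/bash
# Amdahl's law: speedup for parallel fraction p on n cores, to two decimals.
amdahl() {
    awk -v p="$1" -v n="$2" 'BEGIN { printf "%.2f\n", 1 / ((1 - p) + p / n) }'
}

# Tabulate the speedup for a code that is 95% parallel (5% serial).
for n in 1 6 28 112 1000000; do
    echo "N=$n speedup=$(amdahl 0.95 "$n")"
done
```

Under this assumption the law predicts about 4.8x on 6 cores and about 17x on a full 112-core node. These are upper bounds: the model ignores communication cost, so measured scaling will fall below them.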

Access and Broader Implications for European Science

Despite the staggering cost of its hardware, MareNostrum V operates as a publicly funded scientific resource, with access granted free of charge to researchers. For those affiliated with Spanish institutions, applications are processed through the Spanish Supercomputing Network (RES). For researchers across the broader European landscape, the EuroHPC Joint Undertaking (EuroHPC JU) regularly issues calls for access. Their "Development Access" track is particularly relevant for data scientists, specifically designed to support projects involving code porting, benchmarking machine learning models, and exploring novel computational approaches. This accessibility underscores Europe’s commitment to fostering a vibrant research ecosystem and democratizing access to cutting-edge computational tools.

The strategic importance of MareNostrum V extends far beyond its raw computational power. It solidifies Europe’s position in the global supercomputing race, providing critical infrastructure for scientific discovery and technological innovation. Official statements from the Barcelona Supercomputing Center and the EuroHPC JU have consistently emphasized the supercomputer’s role in advancing research in critical areas such as climate change prediction, personalized medicine, energy efficiency, and the development of trustworthy artificial intelligence. Professor Mateo Valero, Director of the BSC, has frequently highlighted the center’s mission to "serve society by applying our knowledge to improve people’s lives," with MareNostrum V being a cornerstone of this commitment. Similarly, the EuroHPC JU views such machines as vital for European digital sovereignty, reducing reliance on external computing resources and fostering a robust domestic HPC ecosystem. The integration of quantum capabilities further positions Europe at the forefront of emerging computing paradigms, attracting top talent and driving economic growth through innovation.

When researchers confront that unassuming SSH prompt, it is easy to overlook the immense infrastructure it represents: roughly 7,500 compute nodes, a fat-tree fabric carrying messages at 200 Gb/s per port, and a scheduler coordinating hundreds of concurrent jobs from scientists across multiple nations. The intuitive, yet misleading, mental model of a "single powerful computer" often persists. However, it is this complex, distributed reality that underpins modern scientific computing, making previously intractable problems solvable and pushing the boundaries of human knowledge. MareNostrum V is not just a machine; it is a symbol of European scientific ambition, a crucible for innovation, and a gateway to future discoveries.
