Meta Unveils V-JEPA 2.1: A Breakthrough in AI’s Understanding of the Physical World

Meta AI researchers have introduced V-JEPA 2.1, the latest iteration of their groundbreaking video world model, marking a significant leap forward in equipping artificial intelligence with a more nuanced understanding of the physical environment. This new model directly addresses a long-standing challenge in AI development: the difficulty in simultaneously capturing both the broad dynamics of a video sequence and the intricate, fine-grained spatial details essential for precise physical interactions. V-JEPA 2.1 achieves this critical balance through a suite of innovative architectural enhancements, a refined training methodology, and an expanded, diverse training dataset.

Early experimental results highlight V-JEPA 2.1’s superior performance across a range of critical applications, with higher accuracy and faster execution in areas such as robotic grasping, autonomous navigation, predicting complex object interactions, and estimating 3D depth from 2D visual inputs. These advancements are not merely incremental; they represent foundational steps toward unlocking a new generation of AI applications that can seamlessly operate and interact within the unpredictable complexities of the physical world, moving beyond confined digital spaces.

The Enduring Challenge of State Estimation in World Models

For artificial intelligence systems to truly engage with and navigate the dynamic, unpredictable physical world, they require sophisticated "world models." These models are essentially an AI’s internal representation of reality, enabling it to accurately perceive its surroundings, anticipate future events, and formulate effective plans of action. At the heart of constructing such robust world models lies the formidable "state-estimation" problem. This involves teaching an AI how to process raw, often noisy, low-level perceptual inputs—such as the deluge of pixels from a camera feed—and distill them into a reliable, structured, and comprehensive summary of the current state of the world. This is akin to a human observing a scene and instantly understanding the objects present, their positions, their movements, and their potential interactions.

One of the most promising avenues for tackling the state estimation challenge has been self-supervised learning from video data. Unlike traditional supervised learning, which heavily relies on human annotators to painstakingly label every object, depth layer, or action within a dataset, self-supervised learning empowers models to learn directly from the raw data itself. By designing tasks where the model predicts missing parts of the data or relationships within it, these systems can, when properly designed, implicitly learn rich representations that encapsulate the fundamental rules governing reality. This includes discerning scene geometry, understanding object dynamics, and inferring intrinsic physical properties without explicit human guidance for every detail. The pioneering work of researchers like Yann LeCun, Meta’s Chief AI Scientist, has long championed self-supervised learning as a crucial pathway to achieving human-level intelligence in machines, particularly for embodied AI that interacts with the physical world.

Despite the rapid strides made in this burgeoning field, a significant hurdle has persisted. Developing a single AI model capable of simultaneously capturing the broad, global dynamics of an entire scene—crucial for high-level tasks like action recognition—while also preserving fine-grained, dense spatio-temporal structures—essential for precise tracking, localization, and accurate geometry extraction throughout visual sequences—has proven exceedingly difficult.

Historically, the AI community has gravitated towards two distinct, often diverging, approaches, each with its own inherent limitations. On one side are "video-first" models, exemplified by earlier iterations of the V-JEPA family, including V-JEPA 2. Joint Embedding Predictive Architectures (JEPA) have demonstrated remarkable efficacy in achieving global video understanding. They excel in environments where modeling motion and temporal dynamics is paramount, making them highly promising candidates for embodied agents that need to predict and plan future actions. However, their primary drawback has been that their learned representations struggle to capture and retain fine-grained, local spatial structures. This makes them less suitable for tasks demanding pixel-perfect details, such as precisely delineating object boundaries or understanding subtle textural variations.

Conversely, "image-first" models, such as Meta’s DINO (DINOv2, DINOv3), occupy the other end of the spectrum. These approaches, primarily trained on static images, are renowned for yielding exceptionally high-quality, dense features that are ideal for precise object detection and segmentation. Their strength lies in static spatial understanding. The inherent limitation, however, is that because these models are trained predominantly on still images, they do not intrinsically learn temporal dynamics or motion from video sequences. This forces developers into a difficult compromise: choose an AI that comprehends how things move but sacrifices precise spatial detail, or an AI that understands where things are but lacks a deep grasp of motion and change over time. V-JEPA 2.1 aims to transcend this dilemma.

The Architecture of V-JEPA 2 and Its Predecessors

To fully appreciate the innovations embedded within V-JEPA 2.1, it’s essential to first understand the foundational principles and limitations of its predecessor, V-JEPA 2. The V-JEPA 2 model operates on a mask-denoising objective to learn its latent representations. Conceptually, this process involves taking a video, dissecting it into numerous small patches across both spatial and temporal dimensions, and then intentionally obscuring a significant portion of these patches from the model’s view. The model’s core task is then to predict the abstract, mathematical representations (embeddings) of these hidden patches, relying solely on the information gleaned from the visible, unmasked patches.

This predictive task is executed through a two-part system. Initially, an encoder component processes the visible video patches, transforming them into context tokens: condensed numerical representations of the observable information. Subsequently, a predictor module receives these context tokens. Crucially, it also incorporates "blank mask tokens" that carry vital positional and temporal information about where and when the missing patches originally belonged. Armed with this context and positional cues, the predictor attempts to generate the correct representations for the obscured sections. The generated representations are then compared against the encoder’s representations of those same patches, computed from the original, unmasked video, and this comparison serves as the self-supervised learning signal.
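The data flow described above can be sketched in a few lines of plain Python. This is a toy illustration, not Meta’s implementation: the uniform random masking, the 75 percent mask ratio, the 2-dimensional vectors, and the helper names `split_tokens` and `build_predictor_input` are all assumptions made for the example.

```python
import random

def split_tokens(num_tokens, mask_ratio, seed=0):
    """Randomly split token positions into visible (context) and masked sets."""
    rng = random.Random(seed)
    indices = list(range(num_tokens))
    rng.shuffle(indices)
    num_masked = int(num_tokens * mask_ratio)
    masked = sorted(indices[:num_masked])
    visible = sorted(indices[num_masked:])
    return visible, masked

def build_predictor_input(context_tokens, visible, masked, mask_token):
    """Pair every position with its context token or with the shared mask token.

    The position in each (position, vector) pair stands in for the positional
    and temporal embedding that a real predictor would add to the token.
    """
    by_position = dict(zip(visible, context_tokens))
    sequence = []
    for pos in sorted(visible + masked):
        sequence.append((pos, by_position.get(pos, mask_token)))
    return sequence

# Toy setup: 8 patch positions, each "encoded" as a 2-dimensional vector.
visible, masked = split_tokens(num_tokens=8, mask_ratio=0.75)
context_tokens = [[float(p), 1.0] for p in visible]  # stand-in for encoder output
mask_token = [0.0, 0.0]                              # stand-in for a learned mask token
predictor_input = build_predictor_input(context_tokens, visible, masked, mask_token)
```

The predictor sees real content only at the visible positions; everywhere else it receives the same blank vector plus a position, which is all it has to work with when guessing the hidden representations.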

During the training phase, a loss function is employed to quantify the discrepancy between the model’s predictions and the true representations of the masked patches. This loss function then penalizes the model for inaccurate predictions, guiding it to refine its internal understanding. However, a critical limitation in V-JEPA 2 was that this loss was exclusively applied to the masked tokens. The model received no explicit supervision or corrective feedback on how it encoded the visible context tokens. This lack of direct grounding for the visible patches allowed the model to take "shortcuts." It wasn’t explicitly compelled to anchor these visible patches in their exact local, spatial reality, leading to a diminished capacity to capture important fine-grained details within the videos, such as the precise boundaries between objects. This often manifested as somewhat grainy or imprecise segmentation of objects, preventing the high-fidelity spatial understanding seen in image-first models.
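A small numeric sketch makes the shortcut visible. It assumes a mean absolute (L1) distance between predicted and target vectors, which the article does not specify, and uses made-up 2-dimensional tokens; `masked_only_loss` is a hypothetical helper name.

```python
def l1_distance(a, b):
    """Mean absolute difference between two equal-length vectors."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def masked_only_loss(outputs, targets, masked_positions):
    """Mean L1 error over masked positions only; visible positions are ignored."""
    errors = [l1_distance(outputs[p], targets[p]) for p in masked_positions]
    return sum(errors) / len(errors)

targets = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0], [4.0, 4.0]]
masked_positions = [2, 3]  # positions 0 and 1 are visible context

# Same predictions at the masked positions, very different visible tokens.
outputs_grounded = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.5], [4.0, 4.5]]
outputs_ungrounded = [[9.0, 9.0], [-5.0, 0.0], [3.0, 3.5], [4.0, 4.5]]

loss_grounded = masked_only_loss(outputs_grounded, targets, masked_positions)
loss_ungrounded = masked_only_loss(outputs_ungrounded, targets, masked_positions)
```

Both losses come out identical, because nothing at positions 0 and 1 enters the sum. A loss of this shape gives the model no reason to keep its visible tokens spatially accurate.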

V-JEPA 2.1: A Paradigm Shift in Architectural Innovation

To overcome the "shortcut problem" and other limitations of previous models, the researchers at Meta meticulously rebuilt the V-JEPA architecture, integrating four major innovations that redefine its capabilities.

-

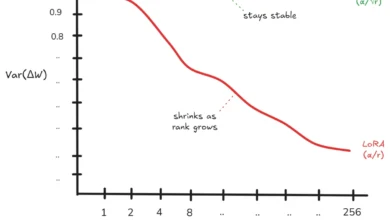

Dense Predictive Loss: The most significant change is the introduction of a "dense predictive loss." Instead of merely evaluating the model’s predictions on the hidden video patches, V-JEPA 2.1 applies this loss to all tokens—both the masked segments it needs to predict and the visible segments it uses for context. This fundamental shift forces the model to meticulously ground every single token in its precise spatial and temporal location. By holding the model accountable for the representations of all input elements, it is compelled to learn higher quality, more accurate, and more detailed representations across the entire visual field. This prevents the model from developing simplistic, ungrounded encodings for the visible parts of the input, directly addressing the "shortcut" issue.

- Deep Self-Supervision: Traditionally, a model’s loss is calculated only at the very final layer of its processing network. V-JEPA 2.1 breaks from this convention by introducing "deep self-supervision," which applies the dense predictive loss hierarchically at multiple intermediate layers of the encoder. This multi-level supervision ensures that fine-grained local spatial information is not lost or diluted as it propagates through the network. Instead, it is actively preserved and reinforced at various stages, allowing it to flow more effectively and directly into the final layers. This hierarchical grounding significantly improves the model’s performance across both fine-grained perception tasks (like segmentation) and high-level vision tasks (like action recognition), as the model builds a more robust, multi-resolution understanding of the visual data.

- Modality-Specific Tokenizers: Earlier video models often handled static images in an inefficient and suboptimal manner, typically by duplicating an image multiple times (e.g., 16 times) to mimic a short video clip. This method was not only computationally wasteful but also inherently confusing for the model, forcing it to treat static images as if they contained temporal motion. V-JEPA 2.1 introduces "modality-specific tokenizers." It employs a specialized 2D processor designed to efficiently and accurately handle static images and a distinct 3D processor optimized for processing dynamic video sequences. Crucially, both of these specialized tokenizers feed into a single, shared encoder. This elegant solution allows the model to process both images and videos in their native formats, eliminating computational redundancy and confusion, leading to more efficient and accurate learning across diverse visual inputs.

- Massive Scaling of Training Data and Model Size: The researchers also rigorously demonstrated that these architectural upgrades could scale effectively to unprecedented levels. They significantly expanded the training data, incorporating a colossal 163 million images and videos. Concurrently, the model’s size was dramatically increased, from 300 million parameters in V-JEPA 2 to an impressive 2 billion parameters in the flagship V-JEPA 2.1 variant. This synergistic combination of architectural innovation, refined training, and massive scaling has been instrumental in achieving across-the-board performance gains in real-world downstream applications, showcasing the true power of these foundational changes.
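The first three changes in the list above can be illustrated with a toy sketch in plain Python. Several things here are assumptions chosen for the example rather than details from the article: the L1 distance, the equal weighting of supervised layers, the 256x256 input resolution, the 16x16 patch size, and the 2-frame tubelet for video. The function names are hypothetical.

```python
def l1_distance(a, b):
    """Mean absolute difference between two equal-length vectors."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def dense_loss(outputs, targets):
    """Mean L1 error over every token, masked and visible alike."""
    errors = [l1_distance(o, t) for o, t in zip(outputs, targets)]
    return sum(errors) / len(errors)

def deep_supervised_loss(layer_outputs, layer_targets):
    """Average the dense loss over several supervised encoder layers."""
    losses = [dense_loss(o, t) for o, t in zip(layer_outputs, layer_targets)]
    return sum(losses) / len(losses)

def token_count(frames, height, width, patch=16, tubelet=1):
    """Number of tokens when the input is cut into tubelet x patch x patch blocks."""
    return (frames // tubelet) * (height // patch) * (width // patch)

targets = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0], [4.0, 4.0]]
# Position 0 is a visible token that has been encoded carelessly.
sloppy = [[9.0, 9.0], [2.0, 2.0], [3.0, 3.0], [4.0, 4.0]]

# Dense loss: the careless visible token is now penalized.
loss_sloppy = dense_loss(sloppy, targets)
loss_exact = dense_loss(targets, targets)

# Deep self-supervision: an error at an intermediate layer still counts,
# even if the final layer is perfect.
loss_deep = deep_supervised_loss([sloppy, targets], [targets, targets])

# Tokenizers: one image repeated 16 times through a video tokenizer,
# versus the same image through a 2D tokenizer.
tokens_duplicated = token_count(frames=16, height=256, width=256, tubelet=2)
tokens_native = token_count(frames=1, height=256, width=256, tubelet=1)
```

Under these assumptions the duplicated image costs eight times as many tokens as the native 2D path, and every one of those extra tokens describes motion that does not exist.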

The V-JEPA 2.1 family comprises multiple variants, all trained on this vast dataset to cater to different computational requirements. The flagship model, ViT-G, boasts 2 billion parameters and delivers the highest performance. A slightly more compact yet highly capable model, ViT-g, operates with 1 billion parameters. Furthermore, the researchers leveraged model distillation techniques to compress the vast knowledge of the large ViT-G model into smaller, highly efficient variants. These include ViT-L, a distilled model with 300 million parameters, and ViT-B, the most lightweight variant, featuring 80 million parameters, making V-JEPA 2.1 accessible for a wider range of applications and hardware constraints.

V-JEPA 2.1 in Action: Unprecedented Performance Benchmarks

The researchers conducted extensive evaluations, pitting V-JEPA 2.1 against the industry’s leading visual AI models, including its direct predecessor V-JEPA 2, other prominent video models like InternVideo2, and powerful image foundation models such as DINOv2 and the 7-billion parameter DINOv3. The results unequivocally demonstrated V-JEPA 2.1’s superior capabilities across multiple challenging benchmarks.

Robotic Grasping and Manipulation: In practical tests, V-JEPA 2.1 was deployed in a zero-shot setting to control a table-top Franka Panda robotic arm, performing complex tasks such as reaching, grasping, and pick-and-place operations. The model achieved a remarkable 20 percent improvement in grasping success rate over V-JEPA 2. Previous models frequently failed due to an inadequate comprehension of 3D depth, causing them to close their grippers prematurely, or open them mid-transit and drop the object. V-JEPA 2.1’s profoundly rich, pixel-level depth understanding now enables robots to physically interact with objects with unprecedented fluidity, precision, and reliability, moving closer to human-like dexterity in cluttered environments. This has immediate implications for automation in manufacturing, logistics, and even delicate surgical procedures.

Autonomous Navigation: For autonomous navigation tests, the model was tasked with navigating towards a visual goal using latent world models on challenging datasets like TartanDrive, SCAND, and SACSoN. V-JEPA 2.1 achieved state-of-the-art trajectory accuracy on TartanDrive while planning roughly 10 times faster than the previous best. It reduced the required internal simulation steps from 128 down to just 8, consequently cutting planning time from over 100 seconds to 10.6 seconds. This combination of speed and precision has profound implications for time-sensitive autonomous applications, such as emergency response drones navigating disaster zones, self-driving vehicles making split-second decisions in dynamic traffic, or exploration robots in unknown terrains.
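The planning figures quoted above can be checked with simple arithmetic. The only assumption is that "over 100 seconds" is treated as exactly 100 seconds, which makes the computed speedup a lower bound.

```python
steps_before, steps_after = 128, 8
seconds_before, seconds_after = 100.0, 10.6  # 100.0 is a lower bound on the old planning time

step_reduction = steps_before / steps_after      # how many times fewer simulation steps
min_speedup = seconds_before / seconds_after     # lower bound on the wall-clock speedup

print(f"{step_reduction:.0f}x fewer steps, at least {min_speedup:.1f}x faster planning")
```

The step count falls by a factor of 16 while wall-clock time falls by a factor of at least about 9.4, consistent with the "roughly 10 times" claim. The two ratios differ, which suggests that the cost of each planning run does not scale purely with the number of simulation steps.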

Forecasting Human Actions and Object Interactions: The model’s predictive prowess was further tested on forecasting human actions using first-person video datasets like Ego4D and EPIC-KITCHENS-100. V-JEPA 2.1 showed massive improvements over incumbent models on both benchmarks, registering a 35 percent relative improvement over the previous state-of-the-art in Ego4D and establishing a new record on EPIC-KITCHENS. This capability translates directly into advancements for augmented reality (AR) applications, where an AI assistant could accurately predict a user’s next action and provide real-time information or interventions exactly when and where they are needed. It also paves the way for more sophisticated collaborative AI systems, where robots can anticipate human movements and intentions in shared workspaces, enhancing safety and efficiency.

3D Depth Estimation and Object Segmentation: In evaluating the model’s ability to map 3D geometric structures from 2D images using the NYUv2 dataset and define strict object boundaries on the ADE20K dataset, V-JEPA 2.1 demonstrated a drastic improvement over V-JEPA 2. Remarkably, it even outperformed the much larger DINOv3 model, which has 7 billion parameters, on the crucial task of depth estimation. This capability is paramount for safety-critical applications like self-driving cars, where instantly distinguishing between a flat painting of a person on the side of a truck and a real 3D pedestrian crossing the street is a matter of life and death. For mixed-reality headsets, accurate 3D understanding is fundamental to seamlessly blending virtual objects with the real world, creating truly immersive and believable experiences.

Object Tracking and Global Action Recognition: The model was also assessed on its capacity to track a specific object across frames using the YouTube-VOS dataset and perform global action recognition via the Something-Something-v2 benchmark. V-JEPA 2.1 performed impressively, setting a new record on Something-Something-v2. In dynamic applications such as sports broadcasting, security surveillance, or industrial monitoring, subjects often move rapidly, change shape, and become temporarily obscured behind other objects. V-JEPA 2.1’s features are robust and temporally consistent, meaning a camera system could, for instance, lock onto a specific hockey player and maintain a stable tracking mask through rapid camera pans, occlusions, and visual distractions, providing continuous, high-fidelity data.

Broader Implications and Future Outlook

The launch of V-JEPA 2.1 represents more than just an incremental update; it is a foundational step towards building truly intelligent embodied AI systems that can reason about and interact with the physical world in ways previously considered science fiction. By bridging the critical gap between global temporal dynamics and local spatial precision, Meta has pushed the boundaries of what self-supervised learning can achieve in visual understanding.

This breakthrough aligns perfectly with Meta’s long-term vision for AI, particularly in the context of the metaverse and advanced robotics. The ability for AI to intuitively understand physical reality—its objects, their properties, their movements, and their interactions—is indispensable for creating compelling virtual worlds, realistic augmented reality experiences, and highly capable robotic assistants. Yann LeCun’s consistent advocacy for self-supervised learning and world models as the path to more generalizable and intelligent AI underscores the strategic importance of V-JEPA 2.1 within Meta’s research roadmap.

However, the researchers acknowledge that there is still substantial work ahead. The current iteration of V-JEPA 2.1 is heavily concentrated on learning superior visual representations. While earlier work with V-JEPA 2 explored the construction of complete world models built upon these representations, fully realizing comprehensive world models that leverage the new dense prediction capabilities of V-JEPA 2.1 remains an ongoing and exciting area of research. Future work will likely involve integrating multi-modal inputs (e.g., touch, sound, proprioception), enabling richer causal reasoning, and developing more sophisticated planning capabilities that can fully exploit the detailed environmental understanding provided by V-JEPA 2.1. The ethical implications of deploying such powerful, physically aware AI systems will also require careful consideration to ensure responsible development and deployment.

In a move that underscores their commitment to open science and collaborative progress, the researchers have made their code and pretrained models publicly available on GitHub. This generous contribution is intended to facilitate further research and accelerate the development of new applications within the broader AI community. As the team aptly notes, "We hope that these contributions will foster research in learning strong representations for physical world modelling, while empowering many applications in video understanding." V-JEPA 2.1 is not just a technological achievement; it is a catalyst for the next generation of AI that truly understands and navigates our complex world.