The paradox of LLM self-distillation: Faster reasoning, weaker generalization – TechTalks

The Rise of Self-Distillation in LLM Optimization

The quest for more efficient and performant large language models has driven significant innovation in AI research. Among the various techniques developed, knowledge distillation has emerged as a powerful paradigm. Traditionally, knowledge distillation involves transferring knowledge from a large, high-performing "teacher" model to a smaller, more efficient "student" model. This process aims to achieve comparable performance with reduced computational cost and faster inference times, making LLMs more accessible and deployable.

Self-distillation, a specialized variant of this technique, takes an even more intriguing approach. Instead of relying on a separate, larger teacher model, self-distillation uses two instances of the same model. One instance acts as the student, generating reasoning sequences based on a standard input prompt. The other instance, the teacher, is provided with a "privileged" context, such as the ground-truth solution, environmental feedback, or other auxiliary signals that are unavailable to the student during inference. By minimizing the divergence between the student’s and teacher’s next-token distributions, the student model is trained to internalize the "hints" derived from this rich context. The goal is for the model to learn to produce concise, confident, and correct reasoning trajectories without requiring an external teacher or these privileged signals at inference time.
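To make the objective concrete, here is a minimal sketch of a per-token divergence loss between a teacher and a student distribution. It is an illustration under stated assumptions, not the study's implementation: the function names are hypothetical, the logits are plain Python lists, and the direction of the divergence (teacher relative to student) is an assumption, since the text above only says the divergence is minimized.

```python
import math


def softmax(logits):
    """Convert raw logits into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions over the same vocabulary."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )


def self_distillation_loss(teacher_logits, student_logits):
    """Average per-token KL(teacher || student) over one sequence.

    teacher_logits: logits from the instance that sees the privileged context.
    student_logits: logits from the instance that sees only the prompt.
    Both are lists of per-position logit lists (hypothetical layout).
    """
    per_token = [
        kl_divergence(softmax(t), softmax(s))
        for t, s in zip(teacher_logits, student_logits)
    ]
    return sum(per_token) / len(per_token)
```

When the student already matches the teacher at every position, the loss is zero; the more confidently the teacher prefers a token that the student is unsure about, the larger the penalty.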

This method has shown remarkable promise, especially when combined with techniques like Reinforcement Learning with Verifiable Rewards (RLVR). RLVR, a popular training technique, rewards models when their final output matches an objectively correct answer. The combination of self-distillation and RLVR has led to impressive performance gains in specific agentic environments and scientific reasoning domains. In these controlled settings, models achieve higher accuracy while compressing the reasoning process, producing shorter and more effective responses. This efficiency is highly desirable, as it translates directly into lower computational overhead and faster user interactions.
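A verifiable reward of this kind can be sketched in a few lines. The example below is a simplified assumption of how such a check might look: the helper names and the normalization rules (trimming whitespace, lowercasing, dropping a trailing period) are hypothetical, and real pipelines typically use more careful answer parsing.

```python
def normalize_answer(text):
    """Hypothetical normalization: trim, lowercase, drop trailing periods and spaces."""
    return text.strip().lower().rstrip(".").replace(" ", "")


def verifiable_reward(model_answer, reference_answer):
    """Return 1.0 if the final answer matches the reference exactly, else 0.0."""
    if normalize_answer(model_answer) == normalize_answer(reference_answer):
        return 1.0
    return 0.0
```

Because the reward depends only on the final answer, it says nothing about how long or how exploratory the reasoning was, which is why it can be paired with very different training signals.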

Unveiling a Critical Flaw: The Generalization Problem

Despite these successes, a recent study by Microsoft Research, KAIST, and Seoul National University reveals a significant limitation: self-distillation’s effectiveness does not extend uniformly across all cognitive tasks, particularly those requiring strong out-of-distribution generalization. The researchers found that in mathematical reasoning, self-distillation inadvertently suppresses crucial behaviors that enable LLMs to explore alternative hypotheses and self-correct during complex problem-solving. This suppression makes the models significantly less accurate on problems outside their training distribution.

The core takeaway from this research is stark: optimizing post-training solely to reinforce concise, correct reasoning traces can subtly but profoundly damage a model’s inherent ability to generalize. Across a variety of open-weight models, the study documented performance drops of up to 40% on unseen tasks following self-distillation. This finding suggests that for LLMs to retain their robust reasoning capabilities, they must be exposed to and allowed to express varying levels of uncertainty during their training and reasoning processes.

Experimental Design and Key Findings

To systematically investigate the impact of self-distillation on mathematical problem-solving, the research team conducted extensive experiments using several prominent open-weight language models. These included a distilled 7B version of DeepSeek-R1, Qwen3-8B, and Olmo3-7B-Instruct. The choice of diverse models aimed to ensure the robustness and generalizability of their findings across different architectures and pre-training methodologies.

The models underwent training using the DAPO-Math-17k dataset, a comprehensive collection of thousands of mathematical problems. This dataset provided a rich environment for teaching the models foundational and advanced mathematical concepts. However, the true test of the models’ capabilities lay in their performance on out-of-distribution generalization benchmarks. For this, the fine-tuned checkpoints were evaluated on unseen or significantly more challenging math benchmarks, including AIME24, AIME25, AMC23, and MATH500. These benchmarks are renowned for their difficulty and for testing an LLM’s ability to apply learned principles to novel problem structures, rather than merely recalling memorized solutions.

The researchers compared two primary reinforcement learning approaches: Group Relative Policy Optimization (GRPO) and Self-Distillation Policy Optimization (SDPO). GRPO served as a baseline, representing a more traditional policy optimization method. SDPO, on the other hand, embodied the self-distillation approach. Additionally, off-policy supervised fine-tuning experiments were conducted, contrasting models trained on standard, unguided responses against those trained on concise, solution-guided responses, a key characteristic of self-distilled outputs.
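For readers unfamiliar with the GRPO baseline, the sketch below shows one common formulation of its group-relative advantage: several responses are sampled for the same prompt, and each response's reward is standardized against the group. The function name, the use of the population standard deviation, and the `eps` stabilizer are assumptions for illustration; the study's exact configuration is not described here.

```python
import statistics


def group_relative_advantages(rewards, eps=1e-6):
    """Standardize each reward against the other samples for the same prompt.

    rewards: one scalar reward per sampled response (e.g. 1.0 if correct, 0.0 if not).
    eps: small constant (an assumption) to avoid division by zero
         when every response in the group receives the same reward.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]
```

Responses that beat the group average receive a positive advantage and are reinforced, while below-average responses are discouraged. Nothing in this signal pushes the model toward a particular reasoning style, in contrast to a distillation target that imitates a teacher's token distribution.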

The results were unequivocal:

- GRPO Performance: The baseline GRPO consistently yielded modest performance gains on the out-of-distribution benchmarks and, notably, prompted a slight increase in response length. This indicated a more exploratory and verbose reasoning process, which seemed beneficial for generalization.

- SDPO Performance Degradation: In stark contrast, SDPO resulted in a sharp drop in response length, a characteristic often hailed as an advantage of distillation. However, this conciseness came at a severe cost. Scores for models trained with SDPO fell by approximately 40% on the AIME24 benchmark and 15% on AMC23. The off-policy experiments corroborated this trend, demonstrating that training on concise, solution-guided trajectories drastically degraded benchmark scores, even when the training dataset consisted entirely of correct mathematical traces. This implied that the method of arriving at the solution, not just the correctness of the solution, was critical.

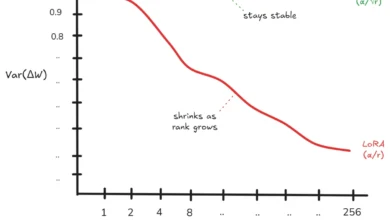

Further analysis involved manipulating the size and diversity of the training tasks. When trained on a small number of questions (1 to 128 problems), SDPO initially appeared highly efficient, achieving high training scores while compressing response lengths by up to eight times compared to GRPO. This initial success likely fueled the widespread adoption of self-distillation. However, as the task coverage expanded to include hundreds or thousands of diverse problems, the dynamic entirely reversed. GRPO’s out-of-distribution performance scaled consistently with dataset growth, demonstrating its robustness. SDPO, however, struggled profoundly to accommodate the broader range of reasoning patterns, leading to severe performance drops on evaluation benchmarks when trained on larger, more varied problem sets.

The Crucial Role of Epistemic Verbalization

To pinpoint the underlying cause of these dramatic performance drops, the researchers delved into the concept of "epistemic verbalization." This refers to the model explicitly expressing uncertainty during its reasoning process through specific tokens or phrases such as "wait," "hmm," "perhaps," "maybe," or re-evaluating previous steps.
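One rough way to quantify this behavior is to count how often such markers appear in a reasoning trace. The sketch below is a hypothetical measurement, not the researchers' method: the marker list contains only the four example words quoted above, and the simple word-level matching ignores multi-word phrases and re-evaluation steps.

```python
import re

# Marker words taken from the examples in the text; the list is illustrative only.
EPISTEMIC_MARKERS = ("wait", "hmm", "perhaps", "maybe")


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z']+", text.lower())


def count_epistemic_markers(text, markers=EPISTEMIC_MARKERS):
    """Return how many times each marker word occurs in the text."""
    words = tokenize(text)
    return {m: words.count(m) for m in markers}


def marker_rate(text, markers=EPISTEMIC_MARKERS):
    """Fraction of words in the text that are epistemic markers."""
    words = tokenize(text)
    if not words:
        return 0.0
    return sum(1 for w in words if w in markers) / len(words)
```

Comparing `marker_rate` on responses sampled before and after training would give a crude indication of whether a training method is stripping out expressions of uncertainty.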

The conventional understanding of LLMs is that they do not plan their entire answer in advance but rather calculate probabilities sequentially, token by token. In this context, tokens like "wait" or "perhaps" are not merely filler words but function as vital computational steps. They signify moments where the model is exploring multiple possibilities, re-evaluating its current trajectory, or maintaining alternative hypotheses before committing to a final path. This process supports a gradual reduction of uncertainty, allowing for iterative refinement of beliefs.

The study found that when a model is allowed to verbalize uncertainty, it effectively navigates complex problem spaces, exploring different avenues and self-correcting as needed. Conversely, when this behavior is artificially suppressed, the model loses its capacity for iterative refinement. It prematurely commits to potentially incorrect hypotheses with limited opportunity for recovery or self-correction, much like a human rushing to a conclusion without fully exploring the problem space.

Self-distillation, by its very nature, suppresses these epistemic signals. The teacher model, guided by highly informative context (often the final correct solution), generates a reasoning trajectory filled with strong hints and minimal expressed uncertainty. When the student model is forced to mimic this output, the training process inadvertently encourages it to adopt a highly confident reasoning style that presupposes information it lacks at inference time. As the conditioning context provided to the teacher becomes richer, the model generates answers with greater confidence and systematically strips away its own epistemic verbalizations. This "trained removal of uncertainty" has profound implications for out-of-distribution generalization.

Broader Impact and Implications for LLM Development

This research carries significant implications for the design, training, and deployment of large language models across various industries. The value of epistemic verbalization, and thus the potential harm of its suppression, scales directly with the generalization demands of a task.

In domains where task coverage is narrow, highly repetitive, or extremely familiar, suppressing uncertainty might indeed enable rapid optimization and efficiency. For instance, the researchers observed that self-distillation remains highly effective in specific scientific domains like chemistry or specialized coding environments. In these datasets, the underlying problem structures often remain very similar, even if surface details change. In such scenarios, explicit expressions of uncertainty are largely redundant. Their removal can make responses faster, more streamlined, and potentially more accurate by eliminating unnecessary exploration. This makes self-distillation a viable, even preferable, strategy for highly specialized, domain-specific applications where inference speed and cost are paramount and out-of-distribution challenges are minimal.

However, for broad, complex domains that demand strong out-of-distribution generalization—where models must handle a vast array of unseen, non-overlapping problem types—relying heavily on self-distillation becomes counterproductive. When a model needs to adapt to novel challenges, preserving its ability to express uncertainty and iteratively refine its beliefs is critical for success. If self-distillation is applied to these expansive problem sets, the aggressive, trained removal of epistemic signals acts as a straitjacket. It actively interferes with the model’s ability to adapt, fundamentally capping its reasoning potential and leading to a significant degradation in performance on tasks requiring genuine understanding and flexible problem-solving.

This discovery prompts a critical re-evaluation of current LLM training paradigms. Developers and researchers are now faced with a clearer, albeit more complex, trade-off: response efficiency versus generalizable reasoning capability. The pursuit of highly concise and confident outputs, while appealing for its immediate benefits, may be inadvertently sacrificing the very cognitive flexibility that makes LLMs powerful tools for addressing complex, real-world problems.

Future research will likely focus on developing more nuanced distillation techniques that preserve epistemic verbalization or introduce mechanisms for controlled uncertainty expression. Hybrid approaches, combining the efficiency gains of distillation with methods that encourage exploratory reasoning, might offer a path forward. Furthermore, the development of evaluation benchmarks that explicitly measure a model’s capacity for self-correction and uncertainty handling will be crucial in guiding the next generation of LLM optimization strategies.

In conclusion, while self-distillation offers impressive benefits in compressing model responses and reducing inference costs, it is not a panacea. Its deployment must be carefully considered based on the specific requirements of the task. For highly specialized, repetitive tasks, it can be a powerful tool. But for complex, open-ended problems demanding robust generalization, preserving a model’s capacity for uncertainty and self-correction is paramount, even if it means sacrificing some degree of conciseness and immediate efficiency. The path to truly intelligent AI systems lies not just in efficiency, but in fostering and understanding their emergent cognitive abilities.